2.3 Loss Function

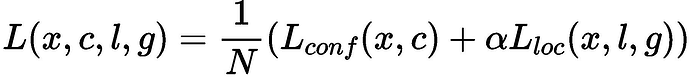

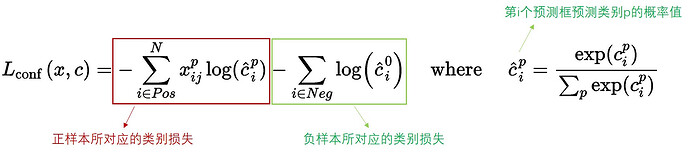

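The SSD objective function has two parts: the confidence loss (conf) between the matched prior boxes and the target classes, and the corresponding localization loss (loc):

$$L(x, c, l, g) = \frac{1}{N}\left(L_{conf}(x, c) + \alpha L_{loc}(x, l, g)\right)$$

where:

N is the number of positive prior boxes;

x is the indicator that marks which prior box is matched with which ground truth, as in the SSD paper;

c is the predicted class confidence;

l is the predicted location of the bounding box that corresponds to a prior box;

g is the location parameter of the ground truth;

α adjusts the ratio between the confidence loss and the localization loss, and defaults to 1.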

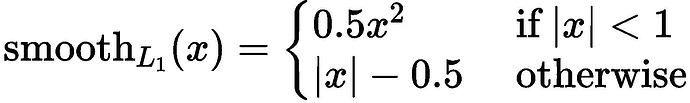

For the localization loss:

Smooth L1 loss is applied to all positive samples, and the location values it compares are the encoded ones.
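As a rough illustration of the Smooth L1 term, the NumPy sketch below evaluates it on made-up encoded offsets. The function name `smooth_l1`, the threshold `beta=1.0`, and the sample values are assumptions for illustration, not the tutorial's own implementation.

```python
import numpy as np

def smooth_l1(pred, target, beta=1.0):
    """Element-wise Smooth L1: quadratic for small errors, linear for large ones."""
    diff = np.abs(pred - target)
    return np.where(diff < beta, 0.5 * diff ** 2 / beta, diff - 0.5 * beta)

# Encoded offsets for two hypothetical positive priors (4 values each).
pred = np.array([[0.2, -0.1, 0.0, 3.0],
                 [0.0, 0.0, 0.5, -2.0]])
target = np.zeros_like(pred)

# Sum over all coordinates of all positive samples.
loc_loss = smooth_l1(pred, target).sum()
```

Large errors (3.0 and -2.0 here) contribute linearly, so a few badly localized boxes do not dominate the gradient the way they would under a squared loss.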

For the confidence loss:

The confidence loss is the softmax loss over the multi-class confidences (c).
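A minimal NumPy sketch of a per-box softmax loss follows. The helper name `softmax_cross_entropy`, the toy logits, and the convention that class 0 is background are assumptions made for this example.

```python
import numpy as np

def softmax_cross_entropy(logits, labels):
    """Per-box softmax loss.

    logits: (num_boxes, num_classes) raw class scores.
    labels: (num_boxes,) integer class index of each box.
    """
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels]

logits = np.array([[2.0, 0.5, 0.1],
                   [0.0, 0.0, 0.0]])
labels = np.array([0, 2])  # class 0 is assumed to be background
loss = softmax_cross_entropy(logits, labels)
```

The second box has uniform scores, so its loss equals log(3); the first box is confidently correct and therefore gets a smaller loss.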

Metrics

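In SSD, training does not use non-maximum suppression (NMS). At detection time, however, for example when an input image must be turned into output boxes, NMS is needed to filter out predicted boxes that overlap heavily.

NMS proceeds as follows:

- Sort the bounding boxes by confidence score

- Select the box with the highest confidence, add it to the final output list, and remove it from the candidate list

- Compute the areas of all bounding boxes

- Compute the IoU between the highest-confidence box and every other candidate box

- Delete the boxes whose IoU exceeds the threshold

- Repeat the process until the candidate list is empty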
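The IoU test at the heart of these steps can be sketched in NumPy as follows. The helper name `iou` and the toy boxes are assumptions. Boxes are given as [y1, x1, y2, x2] with continuous coordinates, so this sketch omits the +1 pixel term that the tutorial's `apply_nms` uses.

```python
import numpy as np

def iou(box, boxes):
    """IoU between one box and an array of boxes, all as [y1, x1, y2, x2]."""
    yy1 = np.maximum(box[0], boxes[:, 0])
    xx1 = np.maximum(box[1], boxes[:, 1])
    yy2 = np.minimum(box[2], boxes[:, 2])
    xx2 = np.minimum(box[3], boxes[:, 3])
    inter = np.maximum(0.0, yy2 - yy1) * np.maximum(0.0, xx2 - xx1)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / (area + areas - inter)

best = np.array([0.0, 0.0, 2.0, 2.0])        # highest-confidence box
others = np.array([[0.0, 0.0, 2.0, 2.0],     # identical box
                   [1.0, 1.0, 3.0, 3.0],     # partial overlap
                   [5.0, 5.0, 6.0, 6.0]])    # no overlap
overlaps = iou(best, others)
keep_mask = overlaps <= 0.5  # candidates that survive at threshold 0.5
```

Only the identical box is suppressed here; the partially overlapping box has an IoU of 1/7 and stays in the candidate list for the next round.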

import os
import stat
import json

import numpy as np
import mindspore.nn as nn
import mindspore.ops as ops
from mindspore import Tensor, save_checkpoint
from mindspore.train.callback import Callback
from pycocotools.coco import COCO
from pycocotools.cocoeval import COCOeval

from src.config import get_config

config = get_config()

def apply_eval(eval_param_dict):
    net = eval_param_dict["net"]
    net.set_train(False)
    ds = eval_param_dict["dataset"]
    anno_json = eval_param_dict["anno_json"]
    coco_metrics = COCOMetrics(anno_json=anno_json,
                               classes=config.classes,
                               num_classes=config.num_classes,
                               max_boxes=config.max_boxes,
                               nms_threshold=config.nms_threshold,
                               min_score=config.min_score)
    for data in ds.create_dict_iterator(output_numpy=True, num_epochs=1):
        img_id = data['img_id']
        img_np = data['image']
        image_shape = data['image_shape']

        output = net(Tensor(img_np))

        for batch_idx in range(img_np.shape[0]):
            pred_batch = {
                "boxes": output[0].asnumpy()[batch_idx],
                "box_scores": output[1].asnumpy()[batch_idx],
                "img_id": int(np.squeeze(img_id[batch_idx])),
                "image_shape": image_shape[batch_idx]
            }
            coco_metrics.update(pred_batch)
    eval_metrics = coco_metrics.get_metrics()
    return eval_metrics

def apply_nms(all_boxes, all_scores, thres, max_boxes):
    """Apply NMS to bboxes."""
    # Boxes are stored as [y1, x1, y2, x2].
    y1 = all_boxes[:, 0]
    x1 = all_boxes[:, 1]
    y2 = all_boxes[:, 2]
    x2 = all_boxes[:, 3]
    areas = (x2 - x1 + 1) * (y2 - y1 + 1)

    # Indices sorted by descending score.
    order = all_scores.argsort()[::-1]
    keep = []

    while order.size > 0:
        # Keep the highest-scoring remaining box.
        i = order[0]
        keep.append(i)

        if len(keep) >= max_boxes:
            break

        # Intersection of box i with every other remaining box.
        xx1 = np.maximum(x1[i], x1[order[1:]])
        yy1 = np.maximum(y1[i], y1[order[1:]])
        xx2 = np.minimum(x2[i], x2[order[1:]])
        yy2 = np.minimum(y2[i], y2[order[1:]])

        w = np.maximum(0.0, xx2 - xx1 + 1)
        h = np.maximum(0.0, yy2 - yy1 + 1)
        inter = w * h

        ovr = inter / (areas[i] + areas[order[1:]] - inter)

        # Drop the boxes whose IoU with box i exceeds the threshold.
        inds = np.where(ovr <= thres)[0]
        order = order[inds + 1]
    return keep

class COCOMetrics:
    """Calculate mAP of predicted bboxes."""

    def __init__(self, anno_json, classes, num_classes, min_score, nms_threshold, max_boxes):
        self.num_classes = num_classes
        self.classes = classes
        self.min_score = min_score
        self.nms_threshold = nms_threshold
        self.max_boxes = max_boxes

        self.val_cls_dict = {i: cls for i, cls in enumerate(classes)}
        self.coco_gt = COCO(anno_json)
        cat_ids = self.coco_gt.loadCats(self.coco_gt.getCatIds())
        self.class_dict = {cat['name']: cat['id'] for cat in cat_ids}

        self.predictions = []
        self.img_ids = []

    def update(self, batch):
        pred_boxes = batch['boxes']
        box_scores = batch['box_scores']
        img_id = batch['img_id']
        h, w = batch['image_shape']

        final_boxes = []
        final_label = []
        final_score = []
        self.img_ids.append(img_id)

        # Class 0 is skipped; NMS runs separately for every other class.
        for c in range(1, self.num_classes):
            class_box_scores = box_scores[:, c]
            score_mask = class_box_scores > self.min_score
            class_box_scores = class_box_scores[score_mask]
            # Scale the normalized [y1, x1, y2, x2] boxes to pixel coordinates.
            class_boxes = pred_boxes[score_mask] * [h, w, h, w]

            if score_mask.any():
                nms_index = apply_nms(class_boxes, class_box_scores, self.nms_threshold, self.max_boxes)
                class_boxes = class_boxes[nms_index]
                class_box_scores = class_box_scores[nms_index]

                final_boxes += class_boxes.tolist()
                final_score += class_box_scores.tolist()
                final_label += [self.class_dict[self.val_cls_dict[c]]] * len(class_box_scores)

        for loc, label, score in zip(final_boxes, final_label, final_score):
            res = {}
            res['image_id'] = img_id
            # Convert [y1, x1, y2, x2] to the COCO [x, y, width, height] format.
            res['bbox'] = [loc[1], loc[0], loc[3] - loc[1], loc[2] - loc[0]]
            res['score'] = score
            res['category_id'] = label
            self.predictions.append(res)

    def get_metrics(self):
        with open('predictions.json', 'w') as f:
            json.dump(self.predictions, f)

        coco_dt = self.coco_gt.loadRes('predictions.json')
        E = COCOeval(self.coco_gt, coco_dt, iouType='bbox')
        E.params.imgIds = self.img_ids
        E.evaluate()
        E.accumulate()
        E.summarize()
        # stats[0] is AP averaged over IoU thresholds 0.50:0.95.
        return E.stats[0]

class SsdInferWithDecoder(nn.Cell):
    """
    SSD Infer wrapper to decode the bbox locations.

    Args:
        network (Cell): the origin ssd infer network without bbox decoder.
        default_boxes (Tensor): the default_boxes from anchor generator
        config (dict): ssd config

    Returns:
        Tensor, the locations for bbox after decoder representing (y0,x0,y1,x1)
        Tensor, the prediction labels.
    """

    def __init__(self, network, default_boxes, config):
        super(SsdInferWithDecoder, self).__init__()
        self.network = network
        self.default_boxes = default_boxes
        self.prior_scaling_xy = config.prior_scaling[0]
        self.prior_scaling_wh = config.prior_scaling[1]

    def construct(self, x):
        pred_loc, pred_label = self.network(x)

        default_bbox_xy = self.default_boxes[..., :2]
        default_bbox_wh = self.default_boxes[..., 2:]
        pred_xy = pred_loc[..., :2] * self.prior_scaling_xy * default_bbox_wh + default_bbox_xy
        pred_wh = ops.Exp()(pred_loc[..., 2:] * self.prior_scaling_wh) * default_bbox_wh

        pred_xy_0 = pred_xy - pred_wh / 2.0
        pred_xy_1 = pred_xy + pred_wh / 2.0
        pred_xy = ops.Concat(-1)((pred_xy_0, pred_xy_1))
        pred_xy = ops.Maximum()(pred_xy, 0)
        pred_xy = ops.Minimum()(pred_xy, 1)
        return pred_xy, pred_label
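To make the decoding step in `construct` easier to check by hand, here is a NumPy sketch of the same arithmetic. The function name `decode_boxes` and the `prior_scaling=(0.1, 0.2)` default are assumptions chosen for illustration; the tutorial reads the real values from `config.prior_scaling`.

```python
import numpy as np

def decode_boxes(pred_loc, default_boxes, prior_scaling=(0.1, 0.2)):
    """Decode predicted offsets against [cy, cx, h, w] default boxes.

    Returns corner boxes [y0, x0, y1, x1] clipped to the range [0, 1].
    """
    default_yx = default_boxes[..., :2]
    default_hw = default_boxes[..., 2:]
    # The center offset is scaled by the default box size, then shifted.
    center = pred_loc[..., :2] * prior_scaling[0] * default_hw + default_yx
    # The size offset is applied in log space.
    size = np.exp(pred_loc[..., 2:] * prior_scaling[1]) * default_hw
    corners = np.concatenate([center - size / 2.0, center + size / 2.0], axis=-1)
    return np.clip(corners, 0.0, 1.0)

default_boxes = np.array([[0.5, 0.5, 0.2, 0.4]])  # one hypothetical default box
zero_offsets = np.zeros((1, 4))
decoded = decode_boxes(zero_offsets, default_boxes)
```

With all offsets at zero, the decoded box is simply the default box converted from center form to corner form.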

2.4 Training Process

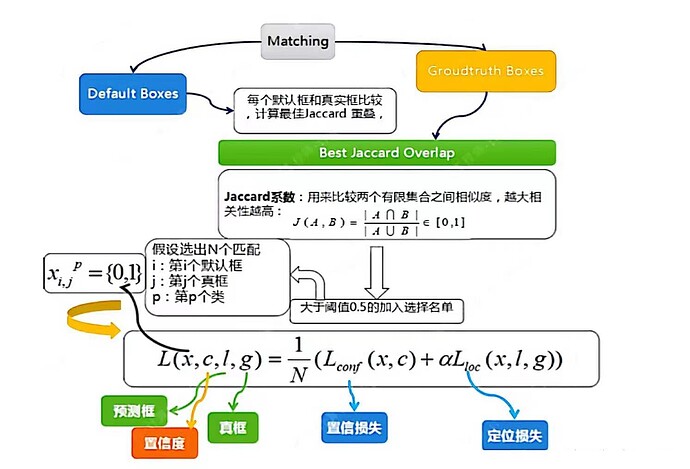

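(1) Prior box matching

During training, the first step is to decide which prior box each ground truth (real object) in a training image is matched with; the bounding box corresponding to the matched prior box is then responsible for predicting that object.

SSD matches prior boxes with ground truths according to two main rules:

- For each ground truth in the image, find the prior box with the largest IoU and match the two. This guarantees that every ground truth is matched with some prior box. A prior box matched with a ground truth is usually called a positive sample; conversely, a prior box that is not matched with any ground truth can only be matched with the background and is a negative sample.

- For each remaining unmatched prior box, if its IoU with some ground truth is greater than a threshold (usually 0.5), the prior box is also matched with that ground truth.

Although one ground truth can be matched with several prior boxes, ground truths are still far fewer than prior boxes, so negative samples greatly outnumber positive ones. To keep the two reasonably balanced, SSD uses hard negative mining: the negatives are sorted in descending order of confidence loss (the lower the predicted background confidence, the larger the loss), and the top-k with the largest loss are kept as training negatives, so that the ratio of positives to negatives stays close to 1:3.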
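The sampling step of hard negative mining can be sketched in NumPy as below. The function name `hard_negative_mask`, the parameter `neg_pos_ratio`, and the toy loss values are assumptions for this example, not the tutorial's own loss code.

```python
import numpy as np

def hard_negative_mask(conf_loss, is_positive, neg_pos_ratio=3):
    """Mark the negatives with the largest confidence loss.

    At most neg_pos_ratio negatives are kept per positive sample.
    """
    num_pos = int(is_positive.sum())
    num_neg = min(neg_pos_ratio * num_pos, int((~is_positive).sum()))
    # Exclude positives from the ranking by giving them the lowest possible loss.
    neg_loss = np.where(is_positive, -np.inf, conf_loss)
    order = np.argsort(-neg_loss)  # indices in descending order of loss
    mask = np.zeros_like(is_positive)
    mask[order[:num_neg]] = True
    return mask

conf_loss = np.array([0.9, 0.1, 2.0, 0.3, 1.5, 0.05, 0.7, 0.2])
is_positive = np.array([True, False, False, False, False, False, False, False])
selected = hard_negative_mask(conf_loss, is_positive)
```

With one positive and a ratio of 3, the three negatives with the highest loss (indices 2, 4, and 6) are selected; the remaining negatives do not contribute to the confidence loss.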

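Notes:

- A prior matched with a gt is usually called a positive sample; conversely, a prior that is not matched with any gt is a negative sample.

- One gt can be matched with several priors, but each prior can be matched with only one gt.

- If several gts have an IoU with the same prior above the threshold, the prior is matched only with the gt that has the largest IoU.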
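The matching rules can be sketched in NumPy as follows, assuming an IoU matrix between ground truths and priors has already been computed. The function name `match_priors` and the toy matrix are assumptions for illustration. The sketch does not specially handle the case where two ground truths claim the same best prior (the later one simply wins).

```python
import numpy as np

def match_priors(iou_matrix, threshold=0.5):
    """Return the matched gt index for each prior, or -1 for background.

    iou_matrix has shape (num_gt, num_priors).
    """
    num_gt, num_priors = iou_matrix.shape
    matched = np.full(num_priors, -1, dtype=np.int64)

    # Threshold rule: a prior takes the gt with which it has the largest IoU,
    # provided that IoU is above the threshold.
    best_gt = iou_matrix.argmax(axis=0)
    best_iou = iou_matrix.max(axis=0)
    above = best_iou > threshold
    matched[above] = best_gt[above]

    # Best-prior rule: every gt claims the prior it overlaps most,
    # even when that IoU is below the threshold.
    best_prior = iou_matrix.argmax(axis=1)
    matched[best_prior] = np.arange(num_gt)
    return matched

iou_matrix = np.array([[0.6, 0.7, 0.1, 0.0],   # gt 0 against 4 priors
                       [0.2, 0.1, 0.3, 0.1]])  # gt 1 against 4 priors
matched = match_priors(iou_matrix)
```

In this toy case gt 0 is matched with two priors, gt 1 still receives its best prior although the IoU is only 0.3, and the last prior stays a background (negative) sample.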

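As the figure above shows, the basic idea of matching prior boxes with ground truth boxes during training is to regress each prior box toward its ground truth box. This process is steered by the loss layer, which computes the error between the ground truth and the prediction and thereby guides the direction of learning.

(2) Loss function

The loss function is the weighted sum of the localization loss and the confidence loss defined in Section 2.3.

(3) Data augmentation

The previously defined data augmentation is applied to the created dataset.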

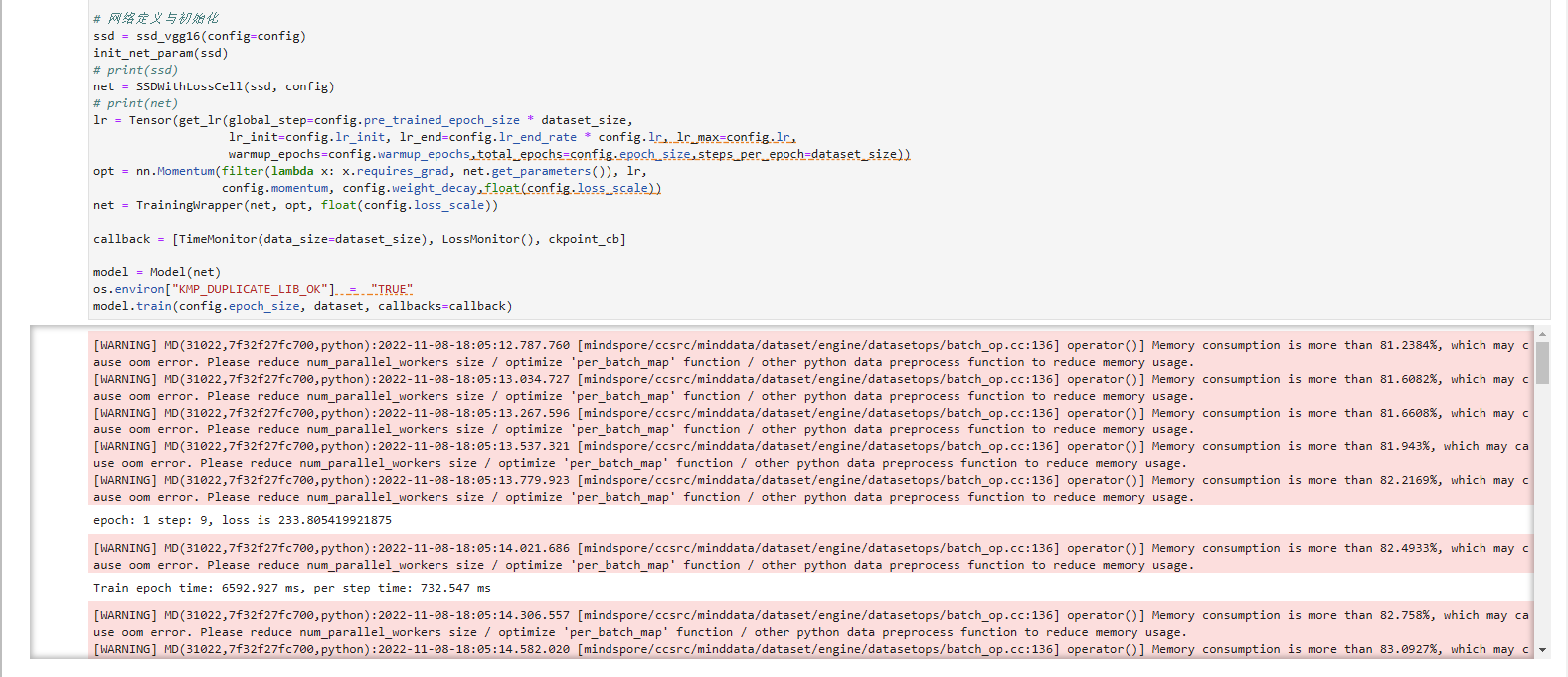

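For training, the number of epochs is set to 60, and the training and validation sets are created with the create_ssd_dataset function. The batch size is 5, and all images are resized to 300×300. The loss is the weighted sum of the localization loss and the confidence loss defined in Section 2.3. The optimizer is Momentum with an initial learning rate of 0.001. As callbacks, LossMonitor and TimeMonitor track how the loss changes during training and how long each epoch and each step takes. A checkpoint is saved every 10 epochs.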

import os
import math
import itertools as it

import numpy as np
import mindspore as ms
import mindspore.nn as nn
import mindspore.ops as ops
from mindspore import Tensor
from mindspore.common import set_seed
from mindspore.train import Model
from mindspore.train.callback import CheckpointConfig, ModelCheckpoint, LossMonitor, TimeMonitor

from src.config import get_config
from src.init_params import init_net_param
from src.lr_schedule import get_lr

class TrainingWrapper(nn.Cell):
    """Wrap the loss network and the optimizer into a single training step."""

    def __init__(self, network, optimizer, sens=1.0):
        super(TrainingWrapper, self).__init__(auto_prefix=False)
        self.network = network
        self.network.set_grad()
        self.weights = ms.ParameterTuple(network.trainable_params())
        self.optimizer = optimizer
        self.grad = ops.GradOperation(get_by_list=True, sens_param=True)
        self.sens = sens
        self.hyper_map = ops.HyperMap()

    def construct(self, *args):
        weights = self.weights
        loss = self.network(*args)
        # Sensitivity tensor with the same dtype and shape as the loss.
        sens = ops.Fill()(ops.DType()(loss), ops.Shape()(loss), self.sens)
        grads = self.grad(self.network, weights)(*args, sens)
        self.optimizer(grads)
        return loss

class GridAnchorGenerator:
    # Only generate_multi_levels appears in this section; the constructor and
    # the generate() method of this class are not shown here.

    def generate_multi_levels(self, steps):
        bbox_centers_list = []
        bbox_corners_list = []
        for step in steps:
            bbox_centers, bbox_corners = self.generate(step)
            bbox_centers_list.append(bbox_centers)
            bbox_corners_list.append(bbox_corners)

        self.bbox_centers = np.concatenate(bbox_centers_list, axis=0)
        self.bbox_corners = np.concatenate(bbox_corners_list, axis=0)
        return self.bbox_centers, self.bbox_corners

class GeneratDefaultBoxes():
    """
    Generate Default boxes for SSD, follows the order of (W, H, archor_sizes).
    `self.default_boxes` has a shape of [archor_sizes, H, W, 4], the last dimension is [y, x, h, w].
    `self.default_boxes_tlbr` has a shape as `self.default_boxes`, the last dimension is [y1, x1, y2, x2].
    """

    def __init__(self):
        fk = config.img_shape[0] / np.array(config.steps)
        scale_rate = (config.max_scale - config.min_scale) / (len(config.num_default) - 1)
        scales = [config.min_scale + scale_rate * i for i in range(len(config.num_default))] + [1.0]
        self.default_boxes = []
        for idex, feature_size in enumerate(config.feature_size):
            sk1 = scales[idex]
            sk2 = scales[idex + 1]
            sk3 = math.sqrt(sk1 * sk2)
            if idex == 0 and not config.aspect_ratios[idex]:
                w, h = sk1 * math.sqrt(2), sk1 / math.sqrt(2)
                all_sizes = [(0.1, 0.1), (w, h), (h, w)]
            else:
                all_sizes = [(sk1, sk1)]
                for aspect_ratio in config.aspect_ratios[idex]:
                    w, h = sk1 * math.sqrt(aspect_ratio), sk1 / math.sqrt(aspect_ratio)
                    all_sizes.append((w, h))
                    all_sizes.append((h, w))
                all_sizes.append((sk3, sk3))

            assert len(all_sizes) == config.num_default[idex]

            for i, j in it.product(range(feature_size), repeat=2):
                for w, h in all_sizes:
                    cx, cy = (j + 0.5) / fk[idex], (i + 0.5) / fk[idex]
                    self.default_boxes.append([cy, cx, h, w])

        def to_tlbr(cy, cx, h, w):
            return cy - h / 2, cx - w / 2, cy + h / 2, cx + w / 2

        # For IoU calculation
        self.default_boxes_tlbr = np.array(tuple(to_tlbr(*i) for i in self.default_boxes), dtype='float32')
        self.default_boxes = np.array(self.default_boxes, dtype='float32')

if hasattr(config, 'use_anchor_generator') and config.use_anchor_generator:
    generator = GridAnchorGenerator(config.img_shape, 4, 2, [1.0, 2.0, 0.5])
    default_boxes, default_boxes_tlbr = generator.generate_multi_levels(config.steps)
else:
    default_boxes_tlbr = GeneratDefaultBoxes().default_boxes_tlbr
    default_boxes = GeneratDefaultBoxes().default_boxes

y1, x1, y2, x2 = np.split(default_boxes_tlbr[:, :4], 4, axis=-1)
vol_anchors = (x2 - x1) * (y2 - y1)
matching_threshold = config.match_threshold

set_seed(1)

# Load the custom configuration
config = get_config()
ms.set_context(mode=ms.GRAPH_MODE, device_target="GPU")

# Load the data
mindrecord_dir = os.path.join(config.data_path, config.mindrecord_dir)
mindrecord_file = os.path.join(mindrecord_dir, "ssd.mindrecord" + "0")

dataset = create_ssd_dataset(mindrecord_file, batch_size=config.batch_size, rank=0, use_multiprocessing=True)
dataset_size = dataset.get_dataset_size()

# Checkpoint
ckpt_config = CheckpointConfig(save_checkpoint_steps=dataset_size * config.save_checkpoint_epochs)
ckpt_save_dir = config.output_path + '/ckpt_{}/'.format(0)
ckpoint_cb = ModelCheckpoint(prefix="ssd", directory=ckpt_save_dir, config=ckpt_config)

# Define and initialize the network
ssd = ssd_vgg16(config=config)
init_net_param(ssd)
net = SSDWithLossCell(ssd, config)

lr = Tensor(get_lr(global_step=config.pre_trained_epoch_size * dataset_size,
                   lr_init=config.lr_init, lr_end=config.lr_end_rate * config.lr, lr_max=config.lr,
                   warmup_epochs=config.warmup_epochs, total_epochs=config.epoch_size,
                   steps_per_epoch=dataset_size))
opt = nn.Momentum(filter(lambda x: x.requires_grad, net.get_parameters()), lr,
                  config.momentum, config.weight_decay, float(config.loss_scale))
net = TrainingWrapper(net, opt, float(config.loss_scale))

callback = [TimeMonitor(data_size=dataset_size), LossMonitor(), ckpoint_cb]
model = Model(net)
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"
model.train(config.epoch_size, dataset, callbacks=callback)

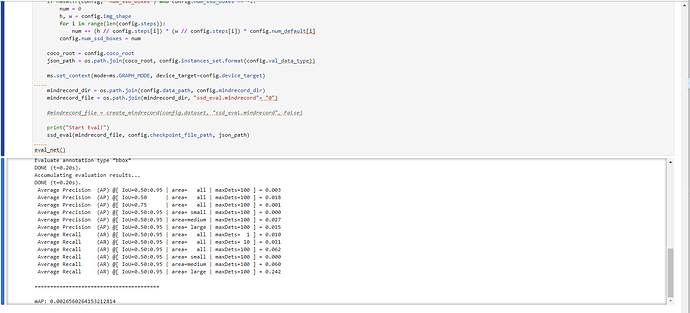

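2.5 Evaluation

The custom eval_net() function evaluates the trained model. It uses the SsdInferWithDecoder class defined above to return the predicted coordinates and labels, then computes Average Precision (AP) and Average Recall (AR) under different IoU thresholds, area ranges, and maxDets settings. The COCOMetrics class computes the mAP. The evaluation metrics of the model on the test set are shown below.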

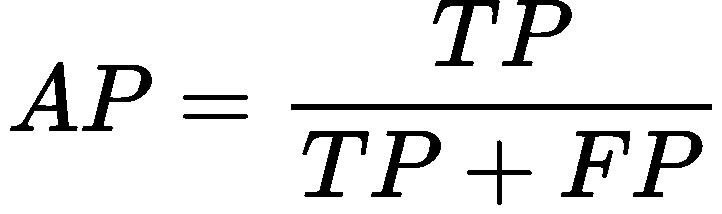

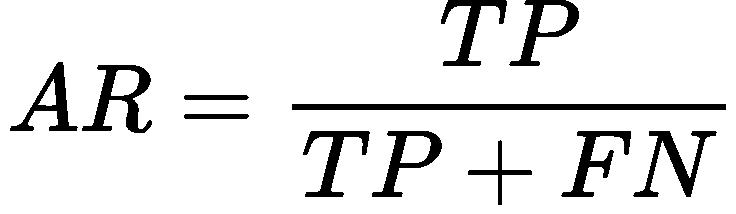

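Explanation of precision and recall, the quantities behind AP and AR:

TP: the number of detection boxes whose IoU is greater than the set threshold (each ground truth is counted only once)

FP: the number of detection boxes whose IoU is less than or equal to the set threshold, plus the redundant detection boxes for a GT that has already been detected

FN: the number of GTs that were not detected

Formulas for precision and recall:

Precision (the quantity averaged in Average Precision, AP): Precision = TP / (TP + FP)

Precision is the ratio of correctly predicted positives to all predicted positives (correct plus incorrect). It mainly reflects how often the predictions are wrong.

Recall (the quantity averaged in Average Recall, AR): Recall = TP / (TP + FN)

Recall is the ratio of correctly predicted positives to all actual positives (those detected plus those missed). It mainly reflects the miss rate.

mAP: mean Average Precision, that is, the mean of the per-class AP values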
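The two ratios can be checked with a few lines of Python. The function name `precision_recall` and the counts are made up for illustration.

```python
def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp > 0 else 0.0
    recall = tp / (tp + fn) if tp + fn > 0 else 0.0
    return precision, recall

# Hypothetical counts: 8 correct detections, 2 false detections, 4 missed objects.
precision, recall = precision_recall(tp=8, fp=2, fn=4)
```

Here 8 of the 10 detections are correct (precision 0.8), while 8 of the 12 objects are found (recall of about 0.67).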

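About the metrics in the evaluation output:

The first value is the mAP, averaged over IoU thresholds from 0.50 to 0.95;

The second value is the mAP at IoU = 0.5, which is the VOC evaluation criterion;

The third value is a stricter mAP (IoU = 0.75 in the COCO output), which reflects how precisely the boxes are localized; the next few values are the mAP for objects of different sizes;

For AR, compare the mAR values at maxDets=10 and maxDets=100, which reflect the detection rate. If the two are close, this dataset does not need 100 boxes per image, so outputting fewer boxes can improve performance.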
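For reading the printed summary, the sketch below pairs the 12 values that pycocotools stores in `COCOeval.stats` (for `iouType='bbox'`) with their meaning. The names `COCO_STAT_NAMES`, `label_stats`, and `maxdets_gap` are hypothetical helpers, and the values are placeholders rather than real results.

```python
# Order of the 12 entries in COCOeval.stats for bbox evaluation.
COCO_STAT_NAMES = [
    "AP  IoU=0.50:0.95 area=all    maxDets=100",
    "AP  IoU=0.50      area=all    maxDets=100",
    "AP  IoU=0.75      area=all    maxDets=100",
    "AP  IoU=0.50:0.95 area=small  maxDets=100",
    "AP  IoU=0.50:0.95 area=medium maxDets=100",
    "AP  IoU=0.50:0.95 area=large  maxDets=100",
    "AR  IoU=0.50:0.95 area=all    maxDets=1",
    "AR  IoU=0.50:0.95 area=all    maxDets=10",
    "AR  IoU=0.50:0.95 area=all    maxDets=100",
    "AR  IoU=0.50:0.95 area=small  maxDets=100",
    "AR  IoU=0.50:0.95 area=medium maxDets=100",
    "AR  IoU=0.50:0.95 area=large  maxDets=100",
]

def label_stats(stats):
    """Pair each value of a 12-element stats sequence with its description."""
    if len(stats) != len(COCO_STAT_NAMES):
        raise ValueError("expected 12 values")
    return dict(zip(COCO_STAT_NAMES, stats))

def maxdets_gap(stats):
    """Difference between AR at maxDets=100 and AR at maxDets=10."""
    return stats[8] - stats[7]

placeholder = [0.0] * 12  # dummy values, not real evaluation results
labeled = label_stats(placeholder)
```

A small `maxdets_gap` corresponds to the case described above, where allowing 100 boxes per image adds little recall over allowing 10.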

import os

import mindspore as ms
from mindspore import Tensor

from src.config import get_config

config = get_config()

def ssd_eval(dataset_path, ckpt_path, anno_json):
    """SSD evaluation."""
    batch_size = 1
    ds = create_ssd_dataset(dataset_path, batch_size=batch_size,
                            is_training=False, use_multiprocessing=False)

    net = ssd_vgg16(config=config)
    net = SsdInferWithDecoder(net, Tensor(default_boxes), config)

    print("Load Checkpoint!")
    param_dict = ms.load_checkpoint(ckpt_path)
    net.init_parameters_data()
    ms.load_param_into_net(net, param_dict)

    net.set_train(False)
    total = ds.get_dataset_size() * batch_size
    print("\n========================================\n")
    print("total images num: ", total)
    print("Processing, please wait a moment.")
    eval_param_dict = {"net": net, "dataset": ds, "anno_json": anno_json}
    mAP = apply_eval(eval_param_dict)
    print("\n========================================\n")
    print(f"mAP: {mAP}")

def eval_net():
    # Derive the total number of SSD boxes from the config when it is not set.
    if hasattr(config, 'num_ssd_boxes') and config.num_ssd_boxes == -1:
        num = 0
        h, w = config.img_shape
        for i in range(len(config.steps)):
            num += (h // config.steps[i]) * (w // config.steps[i]) * config.num_default[i]
        config.num_ssd_boxes = num

    coco_root = config.coco_root
    json_path = os.path.join(coco_root, config.instances_set.format(config.val_data_type))

    ms.set_context(mode=ms.GRAPH_MODE, device_target=config.device_target)

    mindrecord_dir = os.path.join(config.data_path, config.mindrecord_dir)
    mindrecord_file = os.path.join(mindrecord_dir, "ssd_eval.mindrecord" + "0")

    print("Start Eval!")
    ssd_eval(mindrecord_file, config.checkpoint_file_path, json_path)

eval_net()
