1 System environment

Hardware environment (Ascend/GPU/CPU): Ascend/GPU/CPU

MindSpore version: mindspore=2.0.0-rc1

Execution mode (PyNative/Graph): any

Python version: Python=3.9.13

Operating system: any

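2 Error information

2.1 Problem description

The script converts a random tensor of shape (300, 400) into a tensor of shape (200, 210, 500, 2, 5), filling the missing elements with padding. The conversion function is implemented twice, once with PyTorch and once with MindSpore. The PyTorch version runs successfully; the MindSpore version fails.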
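To make the size of the requested padding concrete, the shape arithmetic used by the script can be reproduced without either framework. This is only a sketch: `plan_fix` is a hypothetical helper that does not exist in the original script, and it assumes axis 0 is the batch axis and all remaining axes are flattened into one.

```python
from math import prod


def plan_fix(input_shape, target_shape):
    """Return batch/per-sample sizes and the pad (+) or crop (-) per axis.

    Hypothetical helper: mirrors the b1/s1/b2/s2/x1/x2 arithmetic of the
    conversion functions, but works on plain shape tuples.
    """
    b1 = input_shape[0]                 # input batch size
    s1 = prod(input_shape) // b1        # elements per input sample
    b2 = target_shape[0]                # target batch size
    s2 = prod(target_shape) // b2       # elements per target sample
    return {"b1": b1, "s1": s1, "b2": b2, "s2": s2,
            "x1": s2 - s1,              # > 0: pad the flattened axis
            "x2": b2 - b1}              # < 0: crop the batch axis


plan = plan_fix((300, 400), (200, 210, 500, 2, 5))
print(plan)
```

For the shapes in this report, each of the 300 rows has to grow from 400 to 1,050,000 elements before the batch axis is cropped from 300 to 200.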

2.2 Script code

import mindspore.nn
import torch
import numpy as np
import mindspore as ms

def torch_output_fix(input_data, target_shape):
    r"""
    Pad or crop input_data so that it can be reshaped to target_shape.

    :param input_data: input tensor; axis 0 is treated as the batch axis
    :param target_shape: desired output shape
    :return: input_data in target shape

    example:
        data = torch.tensor(np.random.randn(1, 3, 32, 32))
        output_data = torch_output_fix(data, target_shape=[1, 64, 224, 224])
    """
    flatten = torch.nn.Flatten()
    elements = get_element_nums(input_data)
    b1 = input_data.shape[0]
    s1 = elements // b1
    target_elements = 1
    for shape in target_shape:
        target_elements *= shape
    b2 = target_shape[0]
    s2 = target_elements // b2
    det = elements - target_elements
    if det == 0:
        return torch.reshape(input_data, target_shape)
    x1 = s2 - s1
    x2 = b2 - b1
    out = flatten(input_data)
    # Flattened axis: append x1 zeros, or keep only the first s2 elements.
    if x1 > 0:
        out = torch.nn.functional.pad(out, (0, x1))
    elif x1 < 0:
        out = torch.index_select(out, dim=-1,
                                 index=torch.arange(s2, dtype=torch.int64))
    # Batch axis: append x2 zero rows, or keep only the first b2 rows.
    if x2 > 0:
        out = torch.transpose(out, dim0=1, dim1=0)
        out = torch.nn.functional.pad(out, (0, x2))
        out = torch.transpose(out, dim1=1, dim0=0)
    elif x2 < 0:
        out = torch.index_select(out, dim=0,
                                 index=torch.arange(b2, dtype=torch.int64))
    return torch.reshape(out, target_shape)

def ms_output_fix(input_data, target_shape):
    flatten = mindspore.nn.Flatten()
    elements = get_element_nums(input_data)
    b1 = input_data.shape[0]
    s1 = elements // b1
    target_elements = 1
    for shape in target_shape:
        target_elements *= shape
    b2 = target_shape[0]
    s2 = target_elements // b2
    det = elements - target_elements
    x1 = s2 - s1
    x2 = b2 - b1
    if det == 0:
        return ms.ops.Reshape()(input_data, tuple(target_shape))
    out = flatten(input_data)
    # Flattened axis: append x1 zeros, or slice down to s2 elements.
    if x1 > 0:
        out = ms.nn.Pad(paddings=((0, 0), (0, x1)))(out)
    elif x1 < 0:
        out = ms.ops.Slice()(out, (0, 0), (-1, s2))
    # Batch axis: note that the zero rows are added in front here,
    # whereas the PyTorch version appends them at the end.
    if x2 > 0:
        out = ms.nn.Pad(paddings=((x2, 0), (0, 0)))(out)
    elif x2 < 0:
        out = ms.ops.Slice()(out, (0, 0), (b2, -1))
    return ms.ops.Reshape()(out, tuple(target_shape))

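def get_element_nums(data):
    # Accept either a tensor (read its .shape) or a plain shape tuple/list.
    # The original branch called list(tuple), which raises a TypeError.
    if isinstance(data, (tuple, list)):
        shape = data
    else:
        shape = data.shape
    res = 1
    for i in shape:
        res *= i
    return res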

if __name__ == '__main__':
    input_size, output_size = (300, 400), (200, 210, 500, 2, 5)
    torch_data = torch.tensor(np.random.randn(*input_size))
    print(torch_output_fix(torch_data, target_shape=output_size).shape)
    ms_data = ms.Tensor(np.random.randn(*input_size))
    print(ms_output_fix(ms_data, target_shape=output_size).shape)

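3 Root cause analysis

First, reduce the problem to a minimal reproduction. The failure occurs in nn.Pad and depends on its paddings argument together with the input shape.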

import mindspore
from mindspore import Tensor, nn, ops
import numpy as np

class Net(nn.Cell):
    def __init__(self):
        super(Net, self).__init__()
        # 1049600 = 210 * 500 * 2 * 5 - 400, the x1 computed in ms_output_fix
        self.pad = nn.Pad(paddings=((0, 0), (0, 1049600)))

    def construct(self, x):
        return self.pad(x)

x = Tensor(np.random.randn(300, 400))
pad = Net()
output = pad(x)
print(output)

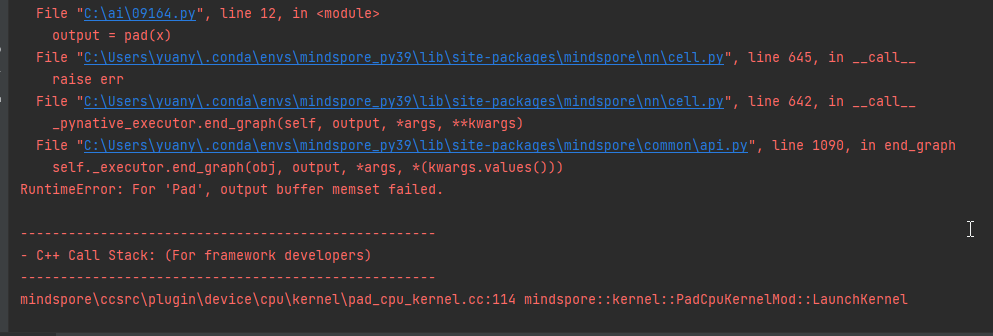

Error message:

For 'Pad', output buffer memset failed

mindspore\ccsrc\plugin\device\cpu\kernel\pad_cpu_kernel.cc:114 mindspore::kernel::PadCpuKernelMod::LaunchKernel

auto *outputs_addr = reinterpret_cast<T *>(outputs[0]->addr);
if (memset_s(outputs_addr, outputs[0]->size, 0, outputs[0]->size) != EOK) {
  MS_LOG(EXCEPTION) << "For '" << kernel_name_ << "', output buffer memset failed.";
}

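The kernel clears the output buffer with the safe function memset_s, which limits how many bytes a single call may operate on. The output buffer requested by this script exceeds that limit, so memset_s returns an error and the exception is raised.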
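A quick calculation shows how large the Pad output buffer is in the minimal reproduction. The sketch below makes two assumptions that the report does not state explicitly: that the tensor keeps the float64 dtype produced by `np.random.randn` (8 bytes per element), and that the memset_s limit is 0x7fffffff bytes, the value commonly used for `SECUREC_MEM_MAX_LEN` in the securec library.

```python
# Assumption: memset_s rejects sizes above 0x7fffffff bytes (about 2 GiB).
ASSUMED_MEMSET_S_LIMIT = 0x7FFFFFFF

rows = 300                 # batch axis of the input, unchanged by this Pad
cols = 400 + 1049600       # flattened axis after padding
itemsize = 8               # assumption: float64 elements

out_bytes = rows * cols * itemsize
print(out_bytes)                            # size of the Pad output buffer in bytes
print(out_bytes > ASSUMED_MEMSET_S_LIMIT)   # whether it exceeds the assumed limit
```

Under these assumptions the buffer is about 2.52 GB, which is above the assumed limit of roughly 2.15 GB.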

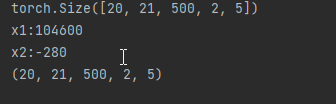

4 Solution

Reduce the output size, for example:

input_size, output_size = (300, 400), (20, 21, 500, 2, 5)

With this target shape the script runs successfully.
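One way to catch an oversized target shape before calling Pad is to estimate the largest buffer the conversion would ask Pad to produce. The following is a sketch only: `largest_pad_output_bytes` is a hypothetical helper, the 0x7fffffff limit is an assumption about memset_s rather than a documented MindSpore constant, and 8-byte float64 elements are assumed.

```python
from math import prod

ASSUMED_LIMIT = 0x7FFFFFFF  # assumption: maximum size accepted by memset_s


def largest_pad_output_bytes(input_shape, target_shape, itemsize=8):
    """Estimate the biggest Pad output buffer the conversion would need."""
    if prod(input_shape) == prod(target_shape):
        return 0                           # plain reshape, Pad is never called
    b1, b2 = input_shape[0], target_shape[0]
    s1 = prod(input_shape) // b1
    s2 = prod(target_shape) // b2
    sizes = [0]
    if s2 > s1:                            # first Pad writes a (b1, s2) buffer
        sizes.append(b1 * s2 * itemsize)
    if b2 > b1:                            # second Pad writes a (b2, s2) buffer
        sizes.append(b2 * s2 * itemsize)
    return max(sizes)


failing = largest_pad_output_bytes((300, 400), (200, 210, 500, 2, 5))
reduced = largest_pad_output_bytes((300, 400), (20, 21, 500, 2, 5))
print(failing, failing <= ASSUMED_LIMIT)
print(reduced, reduced <= ASSUMED_LIMIT)
```

Under these assumptions the original target shape needs a buffer above the limit, while the reduced shape needs about 252 MB and stays well below it.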