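I ran into this problem a while ago while working on handwritten character recognition, and I am recording the full debugging process here.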

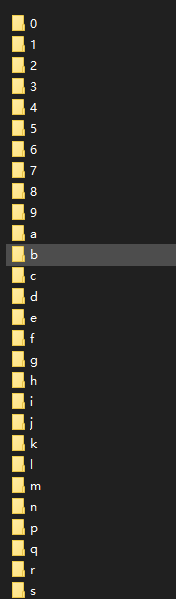

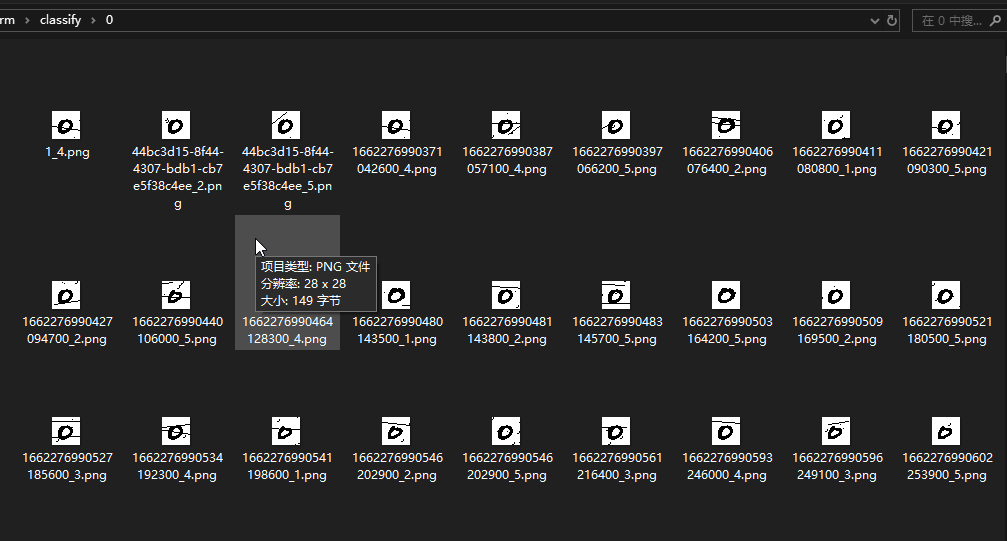

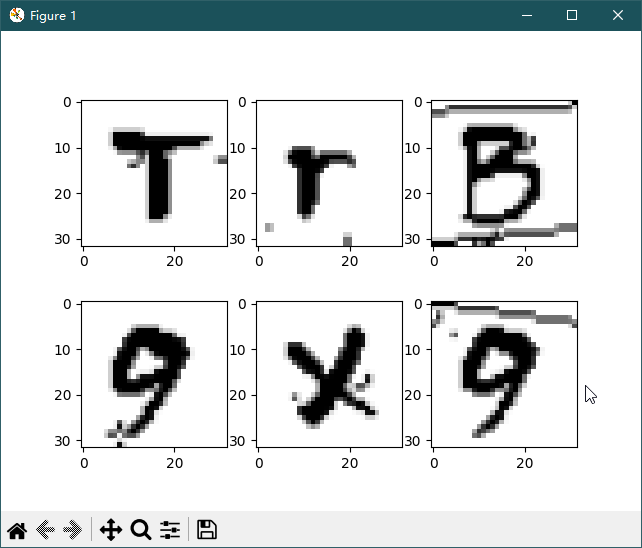

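The dataset is as follows:

36 folders, each containing 3000 images.

Each folder is named after the actual label of the characters inside it.

The image resolution is 28*28 with a bit depth of 1, i.e. the images are monochrome.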
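Before building the pipeline, a quick check of the folder layout can catch missing or misnamed label folders. The sketch below is only an illustration: the helper names `summarize_image_folder` and `check_layout` are hypothetical, and the expected label set is taken from the `class_indexing` mapping used in this post (ten digits, the lowercase letters without "o", and a "space" folder).

```python
import string
from pathlib import Path

# Expected labels, mirroring the class_indexing mapping used in this post:
# ten digits, the lowercase letters without "o", and a "space" folder.
EXPECTED_LABELS = (
    list(string.digits)
    + [c for c in string.ascii_lowercase if c != "o"]
    + ["space"]
)


def summarize_image_folder(root):
    """Return {folder_name: number_of_files} for each sub-folder of root."""
    counts = {}
    for sub in sorted(Path(root).iterdir()):
        if sub.is_dir():
            counts[sub.name] = sum(1 for f in sub.iterdir() if f.is_file())
    return counts


def check_layout(root, expected_labels=EXPECTED_LABELS):
    """Return (missing_labels, unexpected_folders) relative to expected_labels."""
    found = set(summarize_image_folder(root))
    expected = set(expected_labels)
    return sorted(expected - found), sorted(found - expected)
```

For the dataset described here, `check_layout` would be expected to return two empty lists and every count from `summarize_image_folder` would be 3000.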

The following code creates the dataset.

    # Imports inferred from the names used below; the original post does not show them.
    import mindspore.dataset as ds
    import mindspore.dataset.vision as transforms
    from mindspore.dataset.vision import Inter


    def create_dataset(path, batch_size=32):
        # Map each folder name to its integer label.
        dataset = ds.ImageFolderDataset(path,
                                        num_parallel_workers=8,
                                        class_indexing={"0": 0, "1": 1, "2": 2, "3": 3, "4": 4, "5": 5,
                                                        "6": 6, "7": 7, "8": 8, "9": 9, "a": 10, "b": 11,
                                                        "c": 12, "d": 13, "e": 14, "f": 15, "g": 16, "h": 17,
                                                        "i": 18, "j": 19, "k": 20, "l": 21, "m": 22, "n": 23,
                                                        "p": 24, "q": 25, "r": 26, "s": 27, "t": 28, "u": 29,
                                                        "v": 30, "w": 31, "x": 32, "y": 33, "z": 34, "space": 35})
        # First scale pixels to [0, 1], then normalize with mean 0.1307 and std 0.3081.
        rescale = 1.0 / 255.0
        shift = 0.0
        rescale_nml = 1 / 0.3081
        shift_nml = -1 * 0.1307 / 0.3081

        # define map operations
        trans = [
            transforms.Decode(),
            transforms.Resize(size=32, interpolation=Inter.LINEAR),
            transforms.Rescale(rescale, shift),
            transforms.Rescale(rescale_nml, shift_nml),
            transforms.HWC2CHW(),
        ]
        dataset = dataset.map(operations=trans, input_columns="image", num_parallel_workers=8)
        dataset = dataset.batch(batch_size, drop_remainder=True)
        return dataset
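The two `Rescale` steps in the pipeline are easier to follow as plain arithmetic. Assuming `Rescale(rescale, shift)` computes `output = input * rescale + shift`, applying them in sequence is the same as `(pixel / 255 - 0.1307) / 0.3081`. The function names below are hypothetical and only serve to check that equivalence:

```python
import math

MEAN, STD = 0.1307, 0.3081  # constants from the dataset code in this post


def rescale(x, scale, shift):
    """Same arithmetic as a Rescale op: output = x * scale + shift."""
    return x * scale + shift


def normalize_two_step(pixel):
    """Apply the two Rescale steps in the order used by create_dataset."""
    x = rescale(pixel, 1.0 / 255.0, 0.0)
    return rescale(x, 1 / STD, -1 * MEAN / STD)


def normalize_direct(pixel):
    """Closed form: (pixel / 255 - mean) / std."""
    return (pixel / 255.0 - MEAN) / STD


for p in (0, 128, 255):
    print(p, round(normalize_two_step(p), 4), round(normalize_direct(p), 4))
```

Both columns print the same values, so the second `Rescale` is just a mean/std normalization written as a scale and a shift.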

Creating the dataset went fine; the next step is to feed it into a network for training.

First, create the network. The code can be taken directly from the sample.

    # Imports inferred from the names used below; the original post does not show them.
    import mindspore.nn as nn
    from mindspore import vmap


    class LeNet5(nn.Cell):
        """
        LeNet-5 network structure
        """

        def __init__(self, num_class=10, num_channel=1):
            super(LeNet5, self).__init__()
            # Convolution layer: num_channel input channels, 6 output channels, 5*5 kernel
            self.conv1 = nn.Conv2d(num_channel, 6, 5, pad_mode='valid')
            # Convolution layer: 6 input channels, 16 output channels, 5*5 kernel
            self.conv2 = nn.Conv2d(6, 16, 5, pad_mode='valid')
            # Fully connected layer: 16*5*5 inputs, 120 outputs
            self.fc1 = nn.Dense(16 * 5 * 5, 120)
            # Fully connected layer: 120 inputs, 84 outputs
            self.fc2 = nn.Dense(120, 84)
            # Fully connected layer: 84 inputs, num_class outputs
            self.fc3 = nn.Dense(84, num_class)
            # ReLU activation
            self.relu = nn.ReLU()
            self.relu2 = vmap(nn.ReLU(), in_axes=0, out_axes=0)
            # Pooling layer
            self.max_pool2d = nn.MaxPool2d(kernel_size=2, stride=2)
            # Flatten the multi-dimensional array into a one-dimensional array
            self.flatten = nn.Flatten()

        def construct(self, x):
            # Build the forward network from the operations defined above
            x = self.conv1(x)
            x = self.relu(x)
            x = self.max_pool2d(x)
            x = self.conv2(x)
            x = self.relu(x)
            x = self.max_pool2d(x)
            x = self.flatten(x)
            x = self.fc1(x)
            x = self.relu(x)
            x = self.fc2(x)
            x = self.relu(x)
            x = self.fc3(x)
            x = self.relu2(x)
            return x


    network = LeNet5(num_class=36)
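The `16 * 5 * 5` input size of `fc1` follows from the 32*32 input produced by `Resize(size=32)`. A small sketch of the spatial-size arithmetic, assuming 'valid' convolutions with stride 1 and 2*2 pooling with stride 2 and no padding (the helper names are hypothetical):

```python
def conv_valid(size, kernel, stride=1):
    """Output size of a 'valid' (no padding) convolution along one dimension."""
    return (size - kernel) // stride + 1


def max_pool(size, kernel=2, stride=2):
    """Output size of a max-pooling layer with no padding along one dimension."""
    return (size - kernel) // stride + 1


def lenet5_flatten_size(input_size=32):
    size = conv_valid(input_size, 5)   # conv1: 32 -> 28
    size = max_pool(size)              # pool:  28 -> 14
    size = conv_valid(size, 5)         # conv2: 14 -> 10
    size = max_pool(size)              # pool:  10 -> 5
    return 16 * size * size            # 16 channels after conv2


print(lenet5_flatten_size(32))  # 400, i.e. 16 * 5 * 5
print(lenet5_flatten_size(28))  # 256, i.e. 16 * 4 * 4
```

This is why the 28*28 source images are resized to 32*32 first: feeding 28*28 images directly would give 256 flattened features, which would not match `nn.Dense(16 * 5 * 5, 120)`.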

With the network defined, the next step is the loss function.

    net_loss = nn.SoftmaxCrossEntropyWithLogits(sparse=True, reduction='mean')

Then define the optimizer.

    net_opt = nn.Momentum(network.trainable_params(), learning_rate=0.01, momentum=0.9)

Configure checkpoint saving: a checkpoint is saved every 1875 steps.

    config_ck = CheckpointConfig(save_checkpoint_steps=1875, keep_checkpoint_max=10)

Apply the checkpoint configuration.

    ckpoint = ModelCheckpoint(prefix="lenet", directory="./test", config=config_ck)

Then initialize the model.

    model = ms.Model(network, loss_fn=net_loss, optimizer=net_opt, metrics={'accuracy'})

The last step is to train the network; the checkpoint is saved as the file lenet-1_1875.ckpt.

    model.train(30, dataset_train, callbacks=[ckpoint, LossMonitor(0.01, 1875)])
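To put the step count 1875 in context, it helps to compute how many steps one epoch takes. The sketch below assumes that all 36 * 3000 images are used for training; the post does not say whether `dataset_train` is the full set or a split, so treat the first number as an upper bound. The helper name is hypothetical.

```python
def steps_per_epoch(num_images, batch_size, drop_remainder=True):
    """Number of batches per epoch, with or without the last partial batch."""
    if drop_remainder:
        return num_images // batch_size
    return -(-num_images // batch_size)  # ceiling division


# Full dataset described in this post: 36 folders * 3000 images, batch size 32.
print(steps_per_epoch(36 * 3000, 32))  # 3375
# MNIST training set (60000 images) at the same batch size, for comparison.
print(steps_per_epoch(60000, 32))      # 1875
```

1875 is exactly one MNIST epoch at batch size 32, which suggests (though the post does not confirm it) that the value was carried over from the sample code. With this dataset it falls in the middle of an epoch.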

That is all the code, so training can start.

    [WARNING] DEVICE(,40c4,?):2023-6-10 19:10:0 [mindspore\ccsrc\runtime\pynative\async\async_queue.cc:66] mindspore::pynative::AsyncQueue::WorkerLoop] Run task failed, error msg:For 'Conv2D', 'C_in' of input 'x' shape divide by parameter 'group' must be equal to 'C_in' of input 'weight' shape: 1, but got 'C_in' of input 'x' shape: 3, and 'group': 1.
    ----------------------------------------------------
    - C++ Call Stack: (For framework developers)
    ----------------------------------------------------
    mindspore\core\ops\conv2d.cc:241 mindspore::ops::`anonymous-namespace'::Conv2dInferShape
    Traceback (most recent call last):
      File "D:\ai\step1\form\mylenet.py", line 102, in <module>
        model.train(30, dataset_train, callbacks=[ckpoint, LossMonitor(0.01, 1875)])
      File "D:\python3.9\lib\site-packages\mindspore\train\model.py", line 1056, in train
        self._train(epoch,
      File "D:\python3.9\lib\site-packages\mindspore\train\model.py", line 100, in wrapper
        func(self, *args, **kwargs)
      File "D:\python3.9\lib\site-packages\mindspore\train\model.py", line 610, in _train
        self._train_process(epoch, train_dataset, list_callback, cb_params, initial_epoch, valid_infos)
      File "D:\python3.9\lib\site-packages\mindspore\train\model.py", line 909, in _train_process
        outputs = self._train_network(*next_element)
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 661, in __call__
        raise err
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 657, in __call__
        output = self._run_construct(args, kwargs)
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 445, in _run_construct
        output = self.construct(*cast_inputs, **kwargs)
      File "D:\python3.9\lib\site-packages\mindspore\nn\wrap\cell_wrapper.py", line 386, in construct
        loss = self.network(*inputs)
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 661, in __call__
        raise err
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 657, in __call__
        output = self._run_construct(args, kwargs)
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 445, in _run_construct
        output = self.construct(*cast_inputs, **kwargs)
      File "D:\python3.9\lib\site-packages\mindspore\nn\wrap\cell_wrapper.py", line 117, in construct
        out = self._backbone(data)
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 661, in __call__
        raise err
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 657, in __call__
        output = self._run_construct(args, kwargs)
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 445, in _run_construct
        output = self.construct(*cast_inputs, **kwargs)
      File "D:\ai\step1\form\mylenet.py", line 40, in construct
        x = self.max_pool2d(x)
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 661, in __call__
        raise err
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 657, in __call__
        output = self._run_construct(args, kwargs)
      File "D:\python3.9\lib\site-packages\mindspore\nn\cell.py", line 445, in _run_construct
        output = self.construct(*cast_inputs, **kwargs)
      File "D:\python3.9\lib\site-packages\mindspore\nn\layer\pooling.py", line 562, in construct
        if x.ndim == 3:
      File "D:\python3.9\lib\site-packages\mindspore\common\_stub_tensor.py", line 122, in ndim
        return len(self.shape)
      File "D:\python3.9\lib\site-packages\mindspore\common\_stub_tensor.py", line 90, in shape
        self.stub_shape = self.stub.get_shape()
    RuntimeError: For 'Conv2D', 'C_in' of input 'x' shape divide by parameter 'group' must be equal to 'C_in' of input 'weight' shape: 1, but got 'C_in' of input 'x' shape: 3, and 'group': 1.
    ----------------------------------------------------
    - C++ Call Stack: (For framework developers)
    ----------------------------------------------------
    mindspore\core\ops\conv2d.cc:241 mindspore::ops::`anonymous-namespace'::Conv2dInferShape

    Process finished with exit code -1073741819 (0xC0000005)

It failed as soon as it ran. The specific error is:

    RuntimeError: For 'Conv2D', 'C_in' of input 'x' shape divide by parameter 'group' must be equal to 'C_in' of input 'weight' shape: 1, but got 'C_in' of input 'x' shape: 3, and 'group': 1.

Then I scrolled up through the printed stack to find the frame that points into my own code.

      File "D:\ai\step1\form\mylenet.py", line 40, in construct
        x = self.max_pool2d(x)

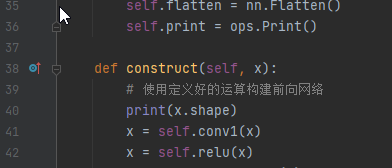

        def construct(self, x):
            # Build the forward network from the operations defined above
            x = self.conv1(x)
            x = self.relu(x)
            x = self.max_pool2d(x)
            x = self.conv2(x)

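The network actually fails at x = self.conv1(x): the error message names the 'Conv2D' operator, so that is where the problem is.

But then why does the stack report the failure at x = self.max_pool2d(x)? Can anyone answer this?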
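One plausible reading of the log, offered as an assumption rather than a confirmed answer: the warning comes from pynative\async\async_queue.cc, and the Python traceback ends in _stub_tensor.py at self.stub.get_shape(). That suggests operators are dispatched asynchronously and a failure only surfaces in Python when a result is first inspected, which here happens when max_pool2d reads x.ndim. The pure-Python analogy below shows how deferred execution can move the reported line. The `LazyTensor` class and `failing_conv` function are hypothetical and are not MindSpore code.

```python
class LazyTensor:
    """Hypothetical stand-in for a deferred result: the work runs on first access."""

    def __init__(self, compute):
        self._compute = compute
        self._shape = None

    @property
    def shape(self):
        if self._shape is None:
            self._shape = self._compute()  # any deferred error is raised here
        return self._shape


def failing_conv(x_channels, weight_channels):
    """Queue a 'convolution' whose channel check only runs when forced."""
    def compute():
        if x_channels != weight_channels:
            raise RuntimeError(
                f"C_in mismatch: input has {x_channels}, weight expects {weight_channels}"
            )
        return (32, 6, 28, 28)
    return LazyTensor(compute)


def forward():
    x = failing_conv(3, 1)  # the faulty call returns without raising
    return len(x.shape)     # the error surfaces here, on first inspection


try:
    forward()
except RuntimeError as err:
    print("raised while reading the shape:", err)
```

In this analogy the traceback would point at the line that reads `x.shape`, not at the call to `failing_conv`, even though the message describes the convolution.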

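First, let's work out what the error message actually means.

    'C_in' of input 'x' shape divide by parameter 'group' must be equal to 'C_in' of input 'weight' shape: 1, but got 'C_in' of input 'x' shape: 3, and 'group': 1.

Roughly: the C_in of x divided by the group parameter must equal the C_in of the weight, which is 1. The actual input x has C_in 3 and group is 1, so the quotient is 3, which does not match the weight's C_in of 1.

Hence the error.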
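The rule in the message can be written as a few lines of Python. This is only an illustration of the check as the message describes it, not the framework's implementation; `check_conv2d_channels` is a hypothetical helper, and the weight layout (C_out, C_in / group, kH, kW) is an assumption of the sketch.

```python
def check_conv2d_channels(x_shape, weight_shape, group=1):
    """Raise ValueError if C_in(x) / group != C_in(weight).

    x_shape is (N, C_in, H, W); weight_shape is (C_out, C_in / group, kH, kW).
    """
    x_c_in = x_shape[1]
    w_c_in = weight_shape[1]
    if x_c_in % group != 0 or x_c_in // group != w_c_in:
        raise ValueError(
            f"C_in of x ({x_c_in}) / group ({group}) must equal "
            f"C_in of weight ({w_c_in})"
        )


# conv1 built with num_channel=1 has a weight of shape (6, 1, 5, 5).
try:
    check_conv2d_channels((32, 3, 32, 32), (6, 1, 5, 5))
except ValueError as err:
    print("mismatch:", err)

# With num_channel=3 the weight becomes (6, 3, 5, 5) and the check passes.
check_conv2d_channels((32, 3, 32, 32), (6, 3, 5, 5))
print("ok")
```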

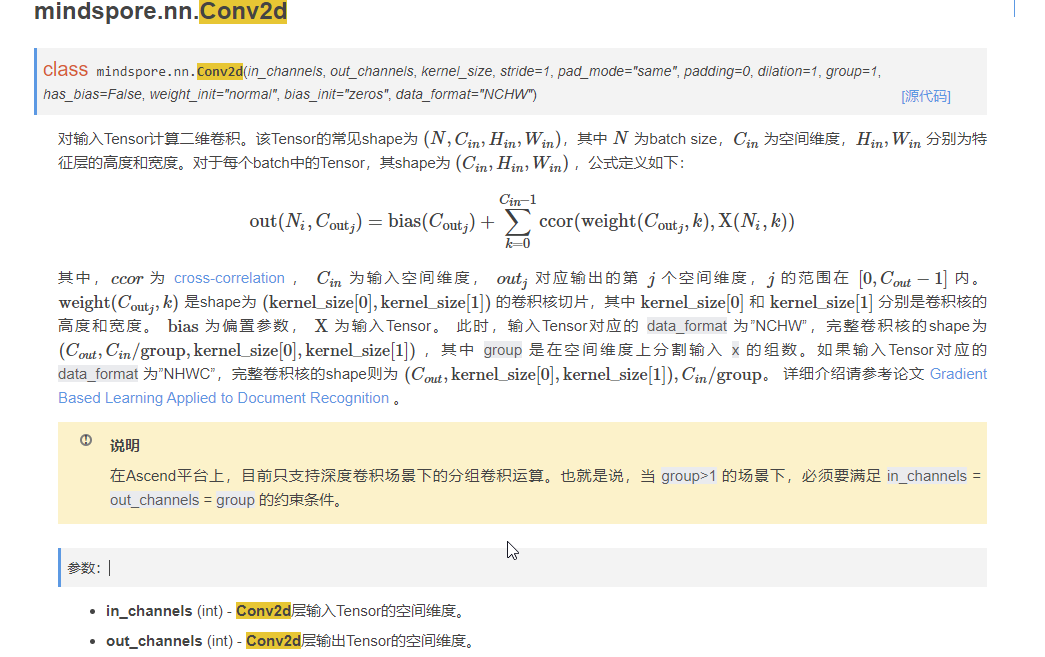

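The message reads like a tongue twister, so let's check what the API documentation says.

The input Tensor has shape (N, C_in, H_in, W_in).

    self.conv1 = nn.Conv2d(num_channel, 6, 5, pad_mode='valid')

I added a print before conv1 to show the input shape.

The printed shape is (32, 3, 32, 32), so the input C_in is 3.

num_channel=1 is where the mismatch comes from.

But the images are 1-bit monochrome, so how did they end up with 3 channels?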

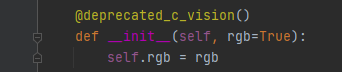

So let's look at how the data is processed when it is read.

    transforms.Decode()

Well, it defaults to rgb=True.

Let's try changing it to False.

    RuntimeError: Exception thrown from dataset pipeline. Refer to 'Dataset Pipeline Error Message'.
    ------------------------------------------------------------------
    - Dataset Pipeline Error Message:
    ------------------------------------------------------------------
    [ERROR] map operation: [Decode] failed. The corresponding data file is: D:\ai\step1\form\classify\0\1662618914169255200_3.png. Decode: only support Decoded into RGB image, check input parameter 'rgb' first, its value should be 'True'.
    ------------------------------------------------------------------
    - C++ Call Stack: (For framework developers)
    ------------------------------------------------------------------
    mindspore\ccsrc\minddata\dataset\kernels\image\decode_op.cc(48).

Hmm, only RGB is supported.

So the only option left is to change the network's num_channel.

        def __init__(self, num_class=10, num_channel=3):

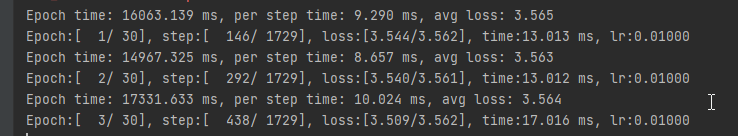

After this change, training runs normally.
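A variation that was not tried in this post: if a monochrome image decoded as RGB ends up with three identical channels (an assumption, not something verified here), two of them are redundant and the image could be collapsed back to one channel so that num_channel=1 still applies. The dependency-free sketch below uses nested lists in H x W x C layout to show the idea; `collapse_identical_channels` is a hypothetical helper, and in a real pipeline this step would have to be a dataset operation placed before HWC2CHW.

```python
def collapse_identical_channels(image_hwc):
    """Return an H x W x 1 nested list from an H x W x C one.

    Raises ValueError if any pixel has channels that differ, since dropping
    channels would then lose information.
    """
    out = []
    for row in image_hwc:
        new_row = []
        for pixel in row:
            if len(set(pixel)) != 1:
                raise ValueError(f"channels differ at pixel {pixel}")
            new_row.append([pixel[0]])
        out.append(new_row)
    return out


# A 1 x 2 image whose three channels carry the same value per pixel.
image = [[[0, 0, 0], [255, 255, 255]]]
print(collapse_identical_channels(image))
```

Widening conv1 to three input channels, as done in this post, is the simpler fix; the cost is a first layer with three times as many input weights.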

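That concludes this debugging session.

Two questions remain open: why the stack pointed at x = self.max_pool2d(x) when the error was actually raised by x = self.conv1(x), and why the Conv2D error message is so unclear.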