1 System environment

- Hardware environment (Ascend/GPU/CPU): Ascend/GPU/CPU
- MindSpore version: mindspore=1.9.0
- Execution mode: GRAPH/PYNATIVE
- Python version: Python=3.9
- Operating system: Windows 10

2 Error message

Traceback (most recent call last):
  File "D:lailrw.py", line 35, in <module>
    main()
  File "D:lailrw.py", line 30, in main
    (loss, logits, extra_output), inputs_gradient = grad_fn(x, y, labels)
  File "D:\python3.9\lib\site-packages\mindspore\common\api.py", line 594, in staging_specialize
    out = _MindsporeFunctionExecutor(func, hash_obj, input_signature, process_obj, jit_config)(*args)
  File "D:\python3.9\lib\site-packages\mindspore\common\api.py", line 98, in wrapper
    results = fn(*arg, **kwargs)
  File "D:\python3.9\lib\site-packages\mindspore\common\api.py", line 405, in __call__
    phase = self.compile(args_list, self.fn.__name__)
  File "D:\python3.9\lib\site-packages\mindspore\common\api.py", line 379, in compile
    is_compile = self._graph_executor.compile(self.fn, compile_args, phase, True)
TypeError: Type Join Failed: dtype1 = Float32, dtype2 = Int64.

For more details, please refer to https://www.mindspore.cn/search?inputValue=Type%20Join%20Failed

Inner Message:

This: AbstractScalar(Type: Float32, Value: AnyValue, Shape: NoShape), other: AbstractScalar(Type: Int64, Value: AnyValue, Shape: NoShape). Please check the node

The function call stack:

In file D:\python3.9\lib\site-packages\mindspore\ops\composite\base.py:527 return grad_(fn, weights)(*args)/

----------------------------------------------------------

- C++ Call Stack: (For framework developers)

----------------------------------------------------------

mindspore\core\abstract\abstract_value.cc:65 TypeJoinLogging

2.1 Problem description

Calling the function returned by ops.value_and_grad raises TypeError: Type Join Failed: dtype1 = Float32, dtype2 = Int64.

2.2 Script code

import numpy as np
import mindspore as ms
import mindspore.ops as ops
from mindspore import Tensor, nn, context


class Graph(nn.Cell):
    def __init__(self):
        super().__init__()
        self.net = nn.Dense(10, 1)

    def construct(self, x, y):
        logits = self.net(x)
        extra_output = ops.nonzero(y > 0)
        return logits, extra_output


def main():
    context.set_context(mode=context.GRAPH_MODE)
    net = Graph()
    x = Tensor(np.random.randn(16, 10), dtype=ms.float32)
    y = Tensor([0, 0, 1, 1])
    labels = Tensor(np.random.randn(16, 1), dtype=ms.float32)
    logit, extra_output = net(x, y)
    assert extra_output.shape == (2, 1)
    loss_fn = nn.MSELoss()

    def forward(x, y, labels):
        logits, extra_output = net(x, y)
        loss = loss_fn(logits, labels)
        return loss, logits, extra_output

    grad_fn = ops.value_and_grad(forward, grad_position=None, weights=net.trainable_params(), has_aux=True)
    # raised TypeError here
    (loss, logits, extra_output), inputs_gradient = grad_fn(x, y, labels)
    assert extra_output.shape == (2, 1)


if __name__ == '__main__':
    main()

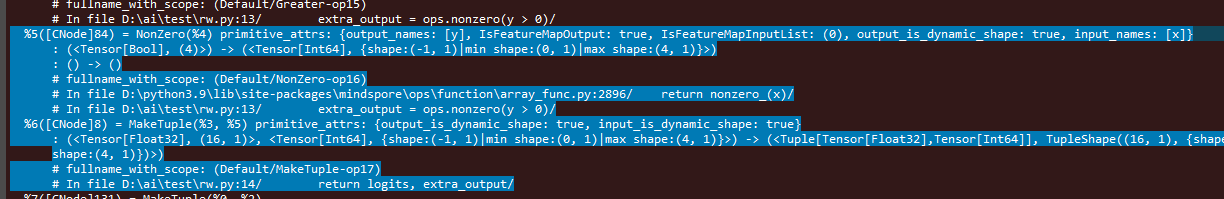

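3 Root cause analysis

- value_and_grad computes the network's forward output and its gradients in a single call. The Graph network returns a tuple, (logits, extra_output):
  - logits is the output of nn.Dense and has dtype float32.
  - extra_output is the output of ops.nonzero and has dtype int64.
- MindSpore uses reverse-mode automatic differentiation: during gradient computation it sums the outputs and then differentiates with respect to the inputs.
  - When the outputs have different dtypes, this step fails with the Type Join Failed error.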
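One way to spot this kind of mismatch before calling value_and_grad is to list the dtype of every forward output. The sketch below is illustrative only: find_dtype_mismatches is a hypothetical helper, not part of MindSpore, and NumPy arrays stand in for the MindSpore tensors so that the snippet runs without a device. It assumes only that each output exposes a dtype attribute.

```python
import numpy as np


def find_dtype_mismatches(outputs):
    """Return (index, dtype) pairs for outputs whose dtype differs from the first one.

    Hypothetical helper for illustration; works on any object with a .dtype attribute.
    """
    reference = str(outputs[0].dtype)
    return [(i, str(out.dtype)) for i, out in enumerate(outputs)
            if str(out.dtype) != reference]


# NumPy stand-ins for the two outputs of Graph.construct (assumption, not MindSpore tensors).
logits = np.zeros((16, 1), dtype=np.float32)  # plays the role of the nn.Dense output
extra_output = np.argwhere(np.array([0, 0, 1, 1]) > 0).astype(np.int64)  # plays the role of ops.nonzero

mismatches = find_dtype_mismatches((logits, extra_output))
print(mismatches)
```

Here the second output is reported because its integer dtype differs from the float32 dtype of the first output, which mirrors the Float32/Int64 pair named in the error message.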

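4 Solution

- There are two options:
  - 1. Convert the return value of the nonzero operator to float32 with the Cast operator.
  - 2. Use an operator whose return value is float32.
    - For example, ops.count_nonzero.
- Option 1: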

import numpy as np
import mindspore as ms
import mindspore.ops as ops
from mindspore import Tensor, nn, context


class Graph(nn.Cell):
    def __init__(self):
        super().__init__()
        self.net = nn.Dense(10, 1)
        self.cast = ops.Cast()

    def construct(self, x, y):
        logits = self.net(x)
        extra_output = ops.nonzero(y > 0)
        # Cast the int64 indices to float32 so both outputs share one dtype.
        extra_output = self.cast(extra_output, ms.float32)
        return logits, extra_output


def main():
    context.set_context(mode=context.GRAPH_MODE)
    net = Graph()
    x = Tensor(np.random.randn(16, 10), dtype=ms.float32)
    y = Tensor([0, 0, 1, 1])
    labels = Tensor(np.random.randn(16, 1), dtype=ms.float32)
    logit, extra_output = net(x, y)
    print(extra_output.shape)
    print(extra_output)
    assert extra_output.shape == (2, 1)
    loss_fn = nn.MSELoss()

    def forward(x, y, labels):
        logits, extra_output = net(x, y)
        loss = loss_fn(logits, labels)
        return loss, logits, extra_output

    grad_fn = ops.value_and_grad(forward, grad_position=None, weights=net.trainable_params(), has_aux=True)
    # The TypeError was raised here before the cast was added.
    (loss, logits, extra_output), inputs_gradient = grad_fn(x, y, labels)
    assert extra_output.shape == (2, 1)


if __name__ == '__main__':
    main()

- Option 2:

import numpy as np
import mindspore as ms
import mindspore.ops as ops
from mindspore import Tensor, nn, context


class Graph(nn.Cell):
    def __init__(self):
        super().__init__()
        self.net = nn.Dense(10, 1)

    def construct(self, x, y):
        logits = self.net(x)
        # count_nonzero can return float32 directly through its dtype argument.
        extra_output = ops.count_nonzero(x=y, keep_dims=True, dtype=ms.float32)
        return logits, extra_output


def main():
    context.set_context(mode=context.GRAPH_MODE)
    net = Graph()
    x = Tensor(np.random.randn(16, 10), dtype=ms.float32)
    y = Tensor([0, 0, 1, 1])
    labels = Tensor(np.random.randn(16, 1), dtype=ms.float32)
    logit, extra_output = net(x, y)
    # assert extra_output.shape == (2, 1)
    loss_fn = nn.MSELoss()

    def forward(x, y, labels):
        logits, extra_output = net(x, y)
        loss = loss_fn(logits, labels)
        return loss, logits, extra_output

    grad_fn = ops.value_and_grad(forward, grad_position=None, weights=net.trainable_params(), has_aux=True)
    # The TypeError was raised here in the original script.
    (loss, logits, extra_output), inputs_gradient = grad_fn(x, y, labels)
    # assert extra_output.shape == (2, 1)


if __name__ == '__main__':
    main()

With either change, the script runs successfully.
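Note that the two options do not return the same thing. The first keeps the indices of the nonzero elements, cast to float32. The second returns only their count, which is why the shape assertion is commented out in the second script. The NumPy sketch below illustrates the difference. np.argwhere and np.count_nonzero are used as stand-ins for ops.nonzero and ops.count_nonzero. That substitution is an assumption made so the snippet runs without MindSpore, and the shapes shown are NumPy's.

```python
import numpy as np

y = np.array([0, 0, 1, 1])

# Analogue of the first option: indices of the positive elements, cast to float32.
indices = np.argwhere(y > 0).astype(np.float32)

# Analogue of the second option: number of nonzero elements, as float32.
count = np.count_nonzero(y, keepdims=True).astype(np.float32)

print(indices.shape, indices.dtype)
print(count.shape, count.dtype)
```

If downstream code needs the positions of the nonzero elements, the cast-based option is the one that preserves them.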