I ran into a problem while migrating a model from torch to MindSpore.

Here is the torch code:

    import torch
    import torch.nn as nn


    class GELUConvBlock(nn.Module):
        def __init__(self, in_ch, out_ch, group_size):
            super().__init__()
            # 3x3 conv, stride 1, padding 1 (bias is left at its default, True)
            self.conv = nn.Conv2d(in_ch, out_ch, 3, 1, 1)
            self.group_norm = nn.GroupNorm(group_size, out_ch)
            self.gelu = nn.GELU()

        def forward(self, x):
            x = self.conv(x)
            print(x)  # raw convolution output, used for the comparison
            x = self.group_norm(x)
            x = self.gelu(x)
            return x

I fixed the weights of nn.Conv2d.

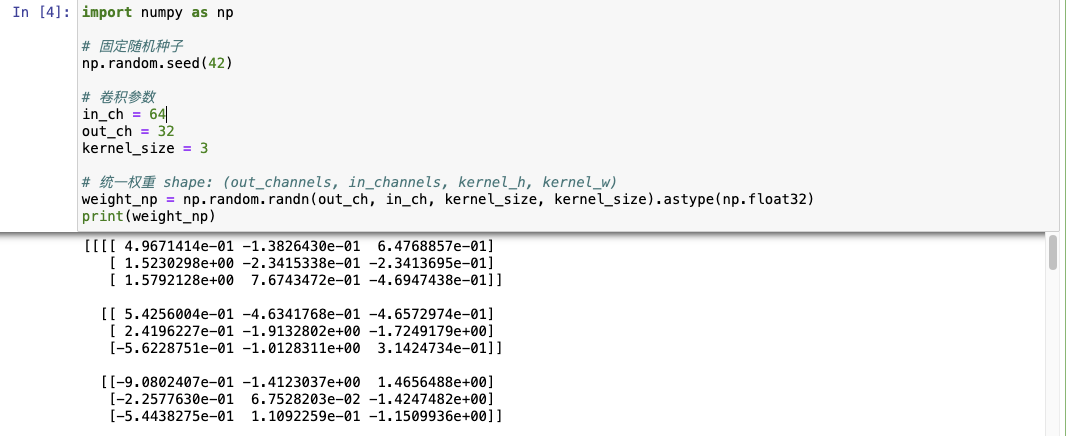

    import numpy as np

    # Fix the random seed
    np.random.seed(42)

    # Convolution parameters
    in_ch = 64
    out_ch = 32
    kernel_size = 3

    # Shared weight, shape: (out_channels, in_channels, kernel_h, kernel_w)
    weight_np = np.random.randn(out_ch, in_ch, kernel_size, kernel_size).astype(np.float32)
    print(weight_np)

This gives the following weights:

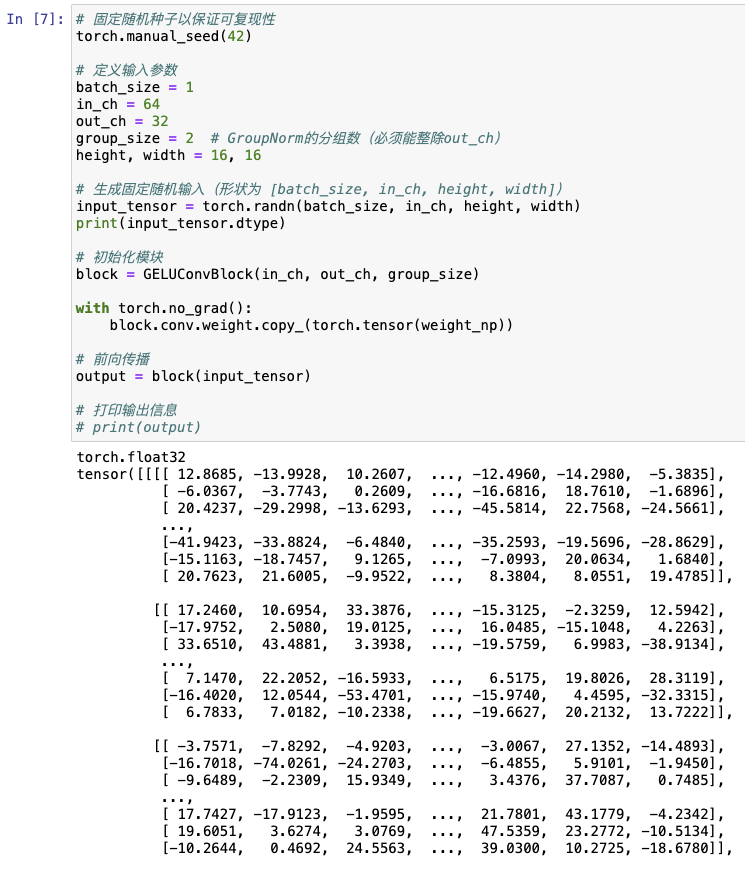

I then fixed the input and took the output right after the convolution. The code and output are as follows:

    # Fix the random seed for reproducibility
    torch.manual_seed(42)

    # Input parameters
    batch_size = 1
    in_ch = 64
    out_ch = 32
    group_size = 2  # number of groups for GroupNorm (must divide out_ch evenly)
    height, width = 16, 16

    # Generate a fixed random input of shape [batch_size, in_ch, height, width]
    input_tensor = torch.randn(batch_size, in_ch, height, width)
    print(input_tensor.dtype)

    # Initialize the module and overwrite the conv weight with the fixed values
    block = GELUConvBlock(in_ch, out_ch, group_size)
    with torch.no_grad():
        block.conv.weight.copy_(torch.tensor(weight_np))

    # Forward pass
    output = block(input_tensor)

    # Print the output
    # print(output)

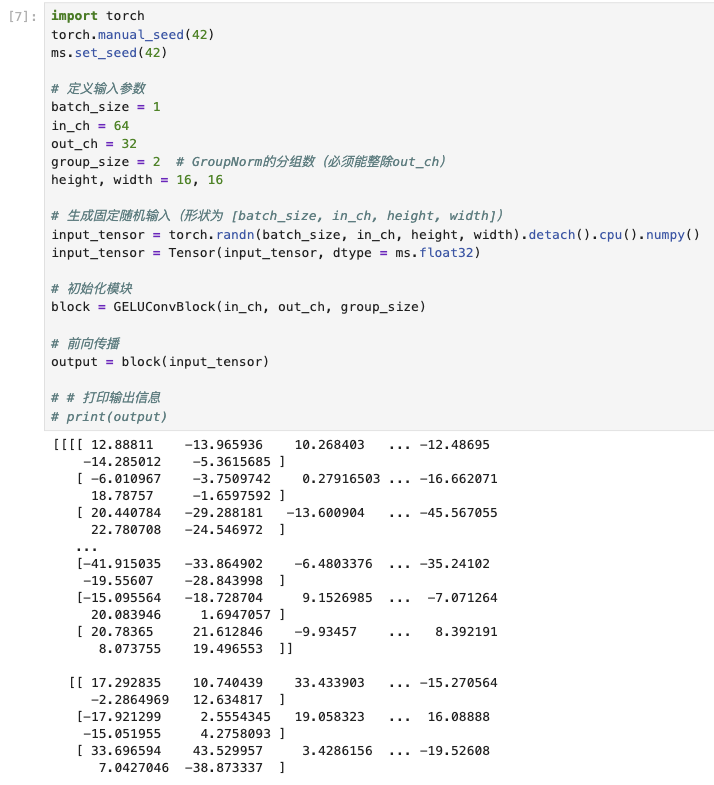
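
When two frameworks disagree, it can help to have a third, framework-independent result to compare both against. The following is a minimal sketch of such a reference, assuming a stride-1 convolution with zero padding and no bias, computed in float64 with NumPy. The function name `conv2d_reference` is hypothetical and not part of either library.

```python
import numpy as np


def conv2d_reference(x, w, padding=1):
    """Stride-1 2D cross-correlation in float64, zero padding, no bias.

    x: (N, C, H, W) input, w: (O, C, kH, kW) weight.
    """
    x = np.asarray(x, dtype=np.float64)
    w = np.asarray(w, dtype=np.float64)
    n, c, h, wd = x.shape
    o, c_w, kh, kw = w.shape
    assert c == c_w, "input and weight channel counts must match"

    # Zero-pad only the two spatial dimensions.
    xp = np.pad(x, ((0, 0), (0, 0), (padding, padding), (padding, padding)))
    out_h = h + 2 * padding - kh + 1
    out_w = wd + 2 * padding - kw + 1

    out = np.zeros((n, o, out_h, out_w), dtype=np.float64)
    # Accumulate one kernel tap at a time: shift the input, contract over channels.
    for i in range(kh):
        for j in range(kw):
            patch = xp[:, :, i:i + out_h, j:j + out_w]  # (N, C, out_h, out_w)
            out += np.einsum("nchw,oc->nohw", patch, w[:, :, i, j])
    return out


# Shapes taken from this post; the data here is random and only for illustration.
rng = np.random.default_rng(0)
x_demo = rng.standard_normal((1, 64, 16, 16)).astype(np.float32)
w_demo = rng.standard_normal((32, 64, 3, 3)).astype(np.float32)
ref = conv2d_reference(x_demo, w_demo)
print(ref.shape)
```

Feeding the same `weight_np` and input to this function and subtracting each framework's convolution output from the result would show which side, if either, drifts from the float64 value.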

I then switched to MindSpore and debugged the code on an NPU in PYNATIVE_MODE.

The initial parameters and the input are fixed in the same way:

    import mindspore as ms
    from mindspore import nn, Tensor


    class GELUConvBlock(nn.Cell):
        def __init__(self, in_ch, out_ch, group_size):
            super().__init__()
            self.conv = nn.Conv2d(in_ch,
                                  out_ch,
                                  3,
                                  stride=1,
                                  has_bias=False,
                                  padding=1,
                                  pad_mode='pad',
                                  dtype=ms.float32)
            # Force the conv weight to the fixed values
            self.conv.weight.set_data(Tensor(weight_np, dtype=ms.float32))
            self.group_norm = nn.GroupNorm(group_size, out_ch)
            self.gelu = nn.GELU(approximate=False)

        def construct(self, x):
            x = self.conv(x)
            print(x)  # raw convolution output, used for the comparison
            x = self.group_norm(x)
            x = self.gelu(x)
            return x
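
One difference between the two definitions that may be worth ruling out: the MindSpore layer is built with `has_bias=False`, while the torch layer earlier in this post uses the default `bias=True` and only its weight is overwritten. torch documents that the default Conv2d bias is drawn from a uniform distribution bounded by `1 / sqrt(fan_in)`. The sketch below only computes that bound for the sizes used here. Whether the bias actually explains the mismatch is an assumption to verify, for example by creating the torch layer with `bias=False`.

```python
import math

# Sizes taken from this post.
in_ch, kernel_size = 64, 3

# fan_in of the convolution: input channels times kernel area.
fan_in = in_ch * kernel_size * kernel_size

# torch initializes the default Conv2d bias from U(-bound, bound).
bias_bound = 1.0 / math.sqrt(fan_in)

print(f"fan_in={fan_in}, |bias| <= {bias_bound:.4f}")
# prints: fan_in=576, |bias| <= 0.0417
```

A bias of this size is added to every output value of a channel, so a torch output that includes it and a MindSpore output that does not would differ by up to a few times 10^-2.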

Running the input through the convolution layer:

    import torch

    torch.manual_seed(42)
    ms.set_seed(42)

    # Input parameters
    batch_size = 1
    in_ch = 64
    out_ch = 32
    group_size = 2  # number of groups for GroupNorm (must divide out_ch evenly)
    height, width = 16, 16

    # Generate the same fixed random input with torch (shape [batch_size, in_ch, height, width])
    # and convert it to a MindSpore tensor
    input_tensor = torch.randn(batch_size, in_ch, height, width).detach().cpu().numpy()
    input_tensor = Tensor(input_tensor, dtype=ms.float32)

    # Initialize the module
    block = GELUConvBlock(in_ch, out_ch, group_size)

    # Forward pass
    output = block(input_tensor)

    # Print the output
    # print(output)

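The result is shown below.

The error is on the order of 10^-2. The rest of the model uses many more convolutions, so the error will grow as it accumulates.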

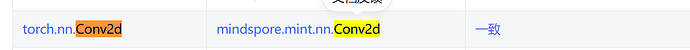
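
Reading the error off printed tensors is hard to do reliably, so a small helper that reports it as numbers may be useful. This is a minimal sketch with NumPy. The function name `compare` and the choice of metrics are assumptions made for illustration.

```python
import numpy as np


def compare(actual, expected):
    """Return max/mean absolute error and the max error relative to the output scale."""
    actual = np.asarray(actual, dtype=np.float64)
    expected = np.asarray(expected, dtype=np.float64)
    diff = np.abs(actual - expected)
    scale = np.abs(expected).max()
    return {
        "max_abs": float(diff.max()),
        "mean_abs": float(diff.mean()),
        # Scaled by the largest expected magnitude so values near zero do not dominate.
        "max_rel_to_scale": float(diff.max() / scale) if scale > 0 else float("nan"),
    }


# Toy values, only to show the output format.
report = compare([1.0, 2.0], [1.0, 2.5])
print(report)
```

With the two convolution outputs stored in hypothetical variables `ms_out` and `torch_out`, the call would look like `compare(ms_out.asnumpy(), torch_out.detach().numpy())`. A roughly constant offset per output channel points toward a bias term, while noise that scales with the output magnitude points toward reduced-precision arithmetic.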

How can I match the precision of torch.nn.Conv2d?

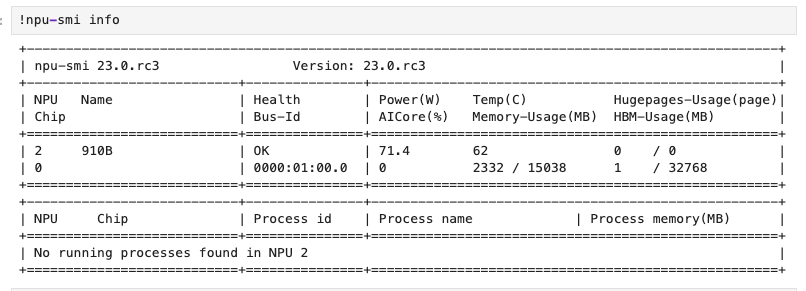
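
Another possible cause is that the accelerator does the multiplications in a lower precision than float32, even though the tensors are declared as float32. Whether the NPU backend does this for this layer is an assumption to check against the MindSpore documentation for the installed version. The NumPy sketch below only models the effect: it rounds the inputs to float16 before a 576-term dot product (64 channels times a 3x3 kernel, as in this post) and compares the error with plain float32. The seed and trial count are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)   # arbitrary seed for this illustration
n_terms = 64 * 3 * 3             # products summed per output value in this post
trials = 1000

x = rng.standard_normal((trials, n_terms)).astype(np.float32)
w = rng.standard_normal((trials, n_terms)).astype(np.float32)

# Reference: the float32 values multiplied and accumulated in float64.
exact = np.sum(x.astype(np.float64) * w.astype(np.float64), axis=1)

# float32 arithmetic throughout.
fp32 = np.sum(x * w, axis=1, dtype=np.float32)

# Inputs rounded to float16 first, products accumulated in float32
# (a rough model of a half-precision multiplier with a wider accumulator).
x16 = x.astype(np.float16).astype(np.float32)
w16 = w.astype(np.float16).astype(np.float32)
fp16_in = np.sum(x16 * w16, axis=1, dtype=np.float32)

err_fp32 = np.abs(fp32 - exact).mean()
err_fp16 = np.abs(fp16_in - exact).mean()
print(f"mean abs error, float32:        {err_fp32:.2e}")
print(f"mean abs error, float16 inputs: {err_fp16:.2e}")
```

If the float16 figure printed here is close to the error observed on the NPU, the backend's precision settings would be the next thing to look at.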

Additional information (versions):

mindspore 2.2.14

torch 2.7.1