/home/HwHiAiUser/.local/lib/python3.9/site-packages/numpy/core/getlimits.py:549: UserWarning: The value of the smallest subnormal for <class 'numpy.float64'> type is zero.

setattr(self, word, getattr(machar, word).flat[0])

/home/HwHiAiUser/.local/lib/python3.9/site-packages/numpy/core/getlimits.py:89: UserWarning: The value of the smallest subnormal for <class 'numpy.float64'> type is zero.

return self._float_to_str(self.smallest_subnormal)

/home/HwHiAiUser/.local/lib/python3.9/site-packages/numpy/core/getlimits.py:549: UserWarning: The value of the smallest subnormal for <class 'numpy.float32'> type is zero.

setattr(self, word, getattr(machar, word).flat[0])

/home/HwHiAiUser/.local/lib/python3.9/site-packages/numpy/core/getlimits.py:89: UserWarning: The value of the smallest subnormal for <class 'numpy.float32'> type is zero.

return self._float_to_str(self.smallest_subnormal)

[WARNING] ME(8134:255086668574752,MainProcess):2025-09-04-16:22:35.406.389 [mindspore/context.py:1402] For 'context.set_context', the parameter 'ascend_config' will be deprecated and removed in a future version. Please use the api mindspore.device_context.ascend.op_precision.precision_mode(),

mindspore.device_context.ascend.op_precision.op_precision_mode(),

mindspore.device_context.ascend.op_precision.matmul_allow_hf32(),

mindspore.device_context.ascend.op_precision.conv_allow_hf32(),

mindspore.device_context.ascend.op_tuning.op_compile() instead.

Traceback (most recent call last):

File "/home/HwHiAiUser/mindnlp/llm/inference/janus_pro/understanding.py", line 4, in <module>

from mindnlp.transformers import AutoModelForCausalLM

File "/home/HwHiAiUser/mindnlp/mindnlp/__init__.py", line 51, in <module>

from mindnlp import transformers

File "/home/HwHiAiUser/mindnlp/mindnlp/transformers/__init__.py", line 16, in <module>

from . import models, pipelines

File "/home/HwHiAiUser/mindnlp/mindnlp/transformers/models/__init__.py", line 19, in <module>

from . import (

File "/home/HwHiAiUser/mindnlp/mindnlp/transformers/models/albert/__init__.py", line 16, in <module>

from . import tokenization_albert, tokenization_albert_fast, configuration_albert, modeling_albert

File "/home/HwHiAiUser/mindnlp/mindnlp/transformers/models/albert/modeling_albert.py", line 28, in <module>

from mindnlp.core import nn, ops

File "/home/HwHiAiUser/mindnlp/mindnlp/core/__init__.py", line 16, in <module>

from . import optim, ops, nn, distributions

File "/home/HwHiAiUser/mindnlp/mindnlp/core/optim/__init__.py", line 2, in <module>

from .optimizer import Optimizer

File "/home/HwHiAiUser/mindnlp/mindnlp/core/optim/optimizer.py", line 28, in <module>

from mindnlp.core.nn import Parameter

File "/home/HwHiAiUser/mindnlp/mindnlp/core/nn/__init__.py", line 16, in <module>

from . import utils, functional, init

File "/home/HwHiAiUser/mindnlp/mindnlp/core/nn/utils/__init__.py", line 2, in <module>

from . import parametrizations

File "/home/HwHiAiUser/mindnlp/mindnlp/core/nn/utils/parametrizations.py", line 9, in <module>

from ...nn.modules import Module

File "/home/HwHiAiUser/mindnlp/mindnlp/core/nn/modules/__init__.py", line 4, in <module>

from .linear import Linear, Identity

File "/home/HwHiAiUser/mindnlp/mindnlp/core/nn/modules/linear.py", line 11, in <module>

from ... import ops

File "/home/HwHiAiUser/mindnlp/mindnlp/core/ops/__init__.py", line 7, in <module>

from .creation import *

File "/home/HwHiAiUser/mindnlp/mindnlp/core/ops/creation.py", line 4, in <module>

from mindspore._c_expression import Tensor as CTensor # pylint: disable=no-name-in-module, import-error

ImportError: cannot import name 'Tensor' from 'mindspore._c_expression' (/home/HwHiAiUser/.local/lib/python3.9/site-packages/mindspore/_c_expression.cpython-39-aarch64-linux-gnu.so)

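Hello, could you please add the version information for your environment, along with the script you are running, so that we can analyze the problem?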
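One way to gather the requested version information is a small script like the one below. It is only a sketch: the helper name `collect_versions` is made up for illustration, and it reads package metadata through the standard-library `importlib.metadata` rather than importing `mindspore` or `mindnlp`, so it still works when the import itself fails. Note that if mindnlp is run directly from a source checkout (as the paths in the traceback suggest) and was never installed with pip, it may show up as "not installed"; in that case, report the git branch or commit as well.

```python
import platform
import sys
from importlib import metadata


def collect_versions(packages=("mindspore", "mindnlp", "numpy")):
    """Return a dict of Python, machine, and installed package versions.

    `collect_versions` is a hypothetical helper for this thread, not part of
    MindSpore or MindNLP.
    """
    info = {
        "python": sys.version.split()[0],
        "machine": platform.machine(),
    }
    for name in packages:
        try:
            # Reads the installed distribution's metadata; does not import it.
            info[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            info[name] = "not installed"
    return info


if __name__ == "__main__":
    for key, value in collect_versions().items():
        print(f"{key}: {value}")
```

Pasting the printed output into the post, together with the CANN version and the hardware model, should give enough detail to compare against known working setups.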

This error is usually caused by a mismatch between the mindnlp and mindspore versions. On the AI Pro, the mindnlp 0.4.1 branch with mindspore 2.5 works and does not hit this problem:

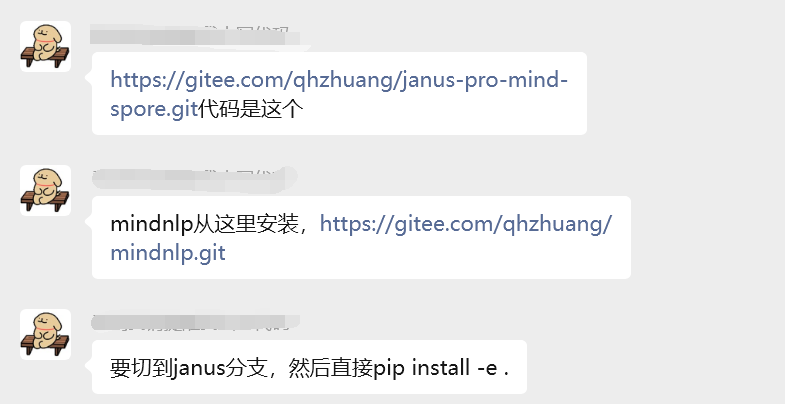
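The mismatch described above can be checked before running the model. The following is a minimal sketch under stated assumptions: `KNOWN_GOOD` lists only the pairing reported as working in this reply and is not an official compatibility matrix, and the helper names `is_known_good` and `has_symbol` are hypothetical. The probe uses the standard-library `importlib` to test whether `mindspore._c_expression` exposes `Tensor`, which is the exact name the traceback fails on.

```python
import importlib

# Pairing reported as working in this thread (mindnlp -> mindspore major.minor).
# This is an assumption taken from the reply, not an official matrix.
KNOWN_GOOD = {"0.4.1": {"2.5"}}


def major_minor(version):
    """Reduce a version string such as '2.5.0' to '2.5'."""
    return ".".join(version.split(".")[:2])


def is_known_good(mindnlp_version, mindspore_version):
    """Return True if the pairing appears in KNOWN_GOOD."""
    allowed = KNOWN_GOOD.get(mindnlp_version, set())
    return major_minor(mindspore_version) in allowed


def has_symbol(module_name, attr):
    """Return True if the module imports and exposes the given attribute."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        return False
    return hasattr(module, attr)


if __name__ == "__main__":
    # The failing import in the traceback corresponds to this probe.
    print(has_symbol("mindspore._c_expression", "Tensor"))
```

If the probe prints `False` on a machine where mindspore is installed, the installed mindspore build does not provide the name that this mindnlp checkout expects, which is consistent with a version mismatch.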

Also, the Janus model has a separate branch for the AI Pro; without it, errors will occur. On 910B or 310P, mindnlp 0.4.1 is enough to run Janus:

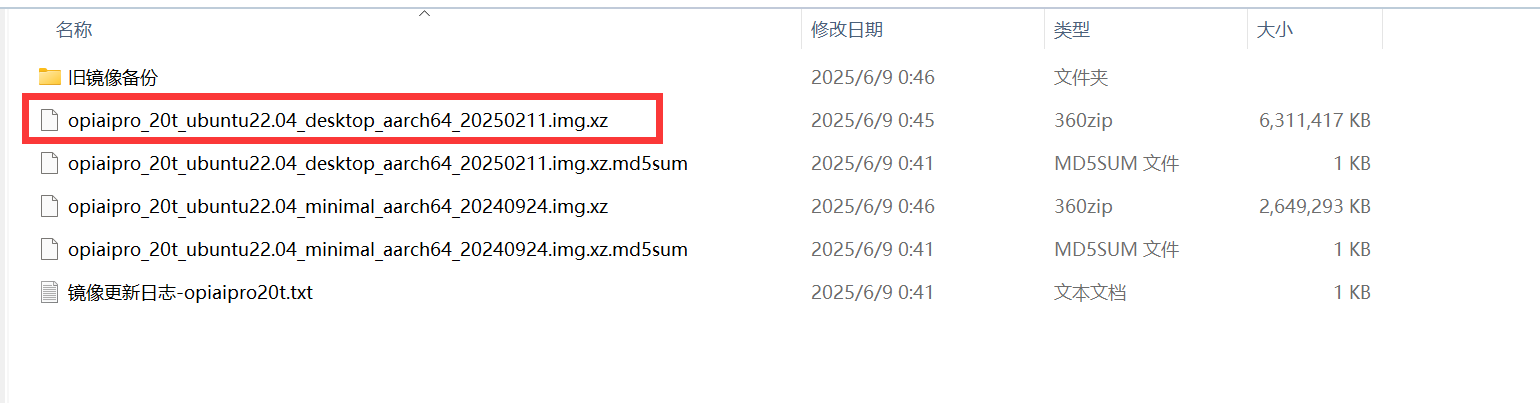

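I previously helped test Janus on the AI Pro following this approach, and I confirmed that it runs with both MindSpore 2.4 and 2.5. I used this image, which ships with CANN 8.0: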

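Hello, the MindSpore support staff have analyzed the problem and explained its cause. Since you have not accepted an answer for some time, the moderator will now accept the answer and close this thread. If you have further questions, please open a new post. Thank you for your support.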
